t(ea) for Two: Test between the Means of Different Groups

1

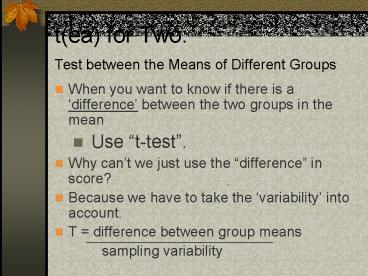

t(ea) for Two: Test between the Means of Different Groups

- When you want to know whether two groups differ in their means, use a t-test.

- Why can't we just use the difference in scores?

- Because we have to take the variability into account.

- t = (difference between group means) / (sampling variability)

2

One-Sample T Test

- Evaluates whether the mean of a test variable is significantly different from a constant (the test value).

- The test value typically represents a neutral point (e.g. the midpoint of the test variable, or the average value of the test variable based on past research).

3

Example of One-sample T-test

- Is the mean starting salary at Company A (17,016.09) the same as the national average starting salary (20,000)?

- Null hypothesis: mean starting salary at Company A = national average

- Alternative hypothesis: mean starting salary at Company A ≠ national average
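
The one-sample test above can be sketched in a few lines of Python. This is a minimal illustration, not the SPSS procedure from the slides: the salary values are hypothetical (chosen only to resemble the example), and the critical value 2.365 is the tabulated two-tailed value for alpha = .05 with 7 degrees of freedom.

```python
import math
import statistics

# Hypothetical starting salaries for Company A (illustrative values only,
# not the employee data used in the slides).
salaries = [15500, 16200, 16800, 17100, 17400, 17900, 18300, 16900]
test_value = 20000  # national average from the example

n = len(salaries)
sample_mean = statistics.mean(salaries)
sample_sd = statistics.stdev(salaries)      # sample SD (n - 1 in the denominator)
standard_error = sample_sd / math.sqrt(n)   # standard error of the mean

# t = (sample mean - test value) / standard error, with n - 1 degrees of freedom
t_stat = (sample_mean - test_value) / standard_error
df = n - 1

# Two-tailed critical value for alpha = .05 and df = 7, taken from a t table.
t_critical = 2.365
reject_null = abs(t_stat) > t_critical

print(f"mean = {sample_mean:.2f}, t({df}) = {t_stat:.2f}, reject H0: {reject_null}")
```

A large absolute t relative to the critical value means the sample mean is far from the test value compared with the sampling variability.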

4

- SPSS demo (employee data)

- Review

- Standard deviation: a measure of dispersion or spread of the scores in a distribution.

- Standard error of the mean: the standard deviation of the sampling distribution of the mean. How much the mean would be expected to vary if the differences were due only to error variance.

- Significance test: a statistical test to determine how likely it is that the observed characteristics of the samples occurred by chance alone in the population from which the samples were selected.
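
To make the standard error of the mean concrete, the following sketch simulates a sampling distribution. It assumes a hypothetical normal population (mean 100, SD 10) and samples of size 25; none of these numbers come from the slides. The standard deviation of the simulated sample means should land close to SD / sqrt(n).

```python
import math
import random
import statistics

random.seed(0)  # fixed seed so the sketch is repeatable

population_mean, population_sd = 100.0, 10.0  # hypothetical population
sample_size = 25
num_samples = 5000

# Draw many samples and record the mean of each one.
sample_means = []
for _ in range(num_samples):
    sample = [random.gauss(population_mean, population_sd) for _ in range(sample_size)]
    sample_means.append(statistics.mean(sample))

# The SD of the sample means approximates the standard error of the mean.
empirical_se = statistics.stdev(sample_means)
theoretical_se = population_sd / math.sqrt(sample_size)

print(f"empirical SE = {empirical_se:.3f}, theoretical SE = {theoretical_se:.3f}")
```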

5

z and t

- z-scores: standardized scores

- z distribution: normal curve with mean z = 0

- In a normally distributed sample (or population), 95% of the people have z-scores between -1.96 and +1.96.

- The t distribution is an adjustment of the z distribution for sample size (with small samples, the sampling distribution has a flatter shape).

- t = (difference between group means) / (sampling variability)
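
The z-score statements on this slide can be checked with Python's standard library. This is a small sketch: the raw scores are hypothetical, and `NormalDist` is used here as the standard normal (z) distribution.

```python
from statistics import NormalDist, mean, pstdev

# Standard normal (z) distribution: mean 0, standard deviation 1.
z_dist = NormalDist(mu=0.0, sigma=1.0)

# Proportion of the distribution between z = -1.96 and z = +1.96.
central_area = z_dist.cdf(1.96) - z_dist.cdf(-1.96)

# The z value that leaves 2.5% in the upper tail.
z_critical = z_dist.inv_cdf(0.975)

# Standardizing hypothetical raw scores: z = (score - mean) / SD.
raw_scores = [52, 61, 47, 70, 58, 66]
score_mean = mean(raw_scores)
score_sd = pstdev(raw_scores)  # SD of these scores, treated as the whole group
z_scores = [(x - score_mean) / score_sd for x in raw_scores]

print(f"area within +/-1.96: {central_area:.4f}, critical z: {z_critical:.3f}")
```

After standardizing, the z-scores have mean 0 and standard deviation 1 by construction.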

6

Confidence Interval

- A range of values, computed from a sample statistic, that is likely (at a given level of probability, i.e. the confidence level) to contain a population parameter.

- The interval that will include that population parameter a certain percentage (the confidence level) of the time.

7

Confidence Interval for Difference and Hypothesis Test

- When the value 0 is not included in the interval, 0 (no difference) is not a plausible population value.

- It appears unlikely that the true difference between Company A's salary average and the national salary average is 0.

- Therefore, Company A's salary average is significantly different from the national salary average.
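
The link between the confidence interval and the hypothesis test can be sketched as follows. The salaries are hypothetical stand-ins for Company A, and 2.365 is the tabulated two-tailed t value for a 95% interval with 7 degrees of freedom; both are assumptions for illustration.

```python
import math
import statistics

# Hypothetical starting salaries for Company A (illustrative values only).
salaries = [15500, 16200, 16800, 17100, 17400, 17900, 18300, 16900]
national_average = 20000

n = len(salaries)
mean_difference = statistics.mean(salaries) - national_average
standard_error = statistics.stdev(salaries) / math.sqrt(n)

# 95% confidence interval for the difference: estimate +/- t_critical * SE.
t_critical = 2.365  # two-tailed, alpha = .05, df = n - 1 = 7 (from a t table)
margin = t_critical * standard_error
ci_low = mean_difference - margin
ci_high = mean_difference + margin

# If 0 lies outside the interval, "no difference" is not a plausible value.
zero_is_plausible = ci_low <= 0 <= ci_high

print(f"95% CI for the difference: [{ci_low:.1f}, {ci_high:.1f}]")
print(f"0 inside interval: {zero_is_plausible}")
```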

8

Independent-Sample T test

- Evaluates the difference between the means of two independent groups.

- Also called the between-groups t test.

- H0: μ1 = μ2

- H1: μ1 ≠ μ2
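
A minimal sketch of the independent-samples test, assuming equal population variances (the pooled-variance form) and hypothetical scores for the two groups. The critical value 2.228 is the tabulated two-tailed value for alpha = .05 with 10 degrees of freedom.

```python
import math
import statistics

# Hypothetical test scores for two independent groups.
group_1 = [78, 85, 92, 88, 75, 81]
group_2 = [70, 74, 80, 68, 77, 73]

n1, n2 = len(group_1), len(group_2)
mean_1, mean_2 = statistics.mean(group_1), statistics.mean(group_2)
var_1, var_2 = statistics.variance(group_1), statistics.variance(group_2)

# Pooled variance: weighted average of the two sample variances.
df = n1 + n2 - 2
pooled_variance = ((n1 - 1) * var_1 + (n2 - 1) * var_2) / df

# Standard error of the difference between the two means.
se_difference = math.sqrt(pooled_variance * (1 / n1 + 1 / n2))

# t = difference between group means / sampling variability
t_stat = (mean_1 - mean_2) / se_difference

t_critical = 2.228  # two-tailed, alpha = .05, df = 10 (from a t table)
reject_null = abs(t_stat) > t_critical

print(f"t({df}) = {t_stat:.2f}, reject H0: {reject_null}")
```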

9

Paired-Sample T test

- Evaluates whether the mean of the differences between the paired variables is significantly different from zero.

- Applicable to 1) repeated measures and 2) matched subjects.

- Also called the within-subject t test or repeated-measures t test.

- H0: μd = 0

- H1: μd ≠ 0
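
The paired test reduces to a one-sample test on the difference scores. A short sketch with hypothetical before/after measurements on the same eight subjects follows; 2.365 is the tabulated two-tailed critical value for alpha = .05 with 7 degrees of freedom.

```python
import math
import statistics

# Hypothetical repeated measures on the same subjects.
before = [72, 65, 80, 58, 77, 69, 74, 62]
after = [75, 70, 82, 63, 76, 74, 79, 66]

# One difference score per subject.
differences = [a - b for a, b in zip(after, before)]

n = len(differences)
mean_diff = statistics.mean(differences)
se_diff = statistics.stdev(differences) / math.sqrt(n)

# Test whether the mean difference is different from zero.
t_stat = mean_diff / se_diff
df = n - 1

t_critical = 2.365  # two-tailed, alpha = .05, df = 7 (from a t table)
reject_null = abs(t_stat) > t_critical

print(f"mean difference = {mean_diff}, t({df}) = {t_stat:.2f}, reject H0: {reject_null}")
```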

10

SPSS Demo

11

Analysis of Variance (ANOVA)

- An inferential statistical procedure used to test the null hypothesis that the means of two or more populations are equal to each other.

- The test statistic for ANOVA is the F statistic (named for R. A. Fisher, the creator of the statistic).

12

T test vs. ANOVA

- T-test

  - Compares two groups.

  - Tests the null hypothesis that two populations have the same average.

- ANOVA

  - Compares more than two groups.

  - Tests the null hypothesis that several populations all have the same average.

13

ANOVA example

- Example: Curricula A, B, C.

- You want to know:

  - What the average score on the test of computer operations would have been if the entire population of 4th graders in the school system had been taught using Curriculum A.

  - What the population average would have been had they been taught using Curriculum B.

  - What the population average would have been had they been taught using Curriculum C.

- Null hypothesis: the population averages would have been identical regardless of the curriculum used.

- Alternative hypothesis: the population averages differ for at least one pair of populations.

14

ANOVA F-ratio

- The variation in the averages of these samples, from one sample to the next, will be compared to the variation among individual observations within each of the samples.

- A statistic termed an F-ratio will be computed. It summarizes the variation among sample averages, compared to the variation among individual observations within samples.

- This F-statistic will be compared to tabulated critical values that correspond to selected alpha levels.

- If the computed value of the F-statistic is larger than the critical value, the null hypothesis of equal population averages will be rejected in favor of the alternative that the population averages differ.

15

Interpreting Significance

- p < .05

- The probability of observing an F-statistic at least this large, given that the null hypothesis is true, is less than .05.

16

Logic of ANOVA

- If 2 or more populations have identical averages, the averages of random samples selected from those populations ought to be fairly similar as well.

- Sample statistics vary from one sample to the next; however, large differences among the sample averages would cause us to question the hypothesis that the samples were selected from populations with identical averages.

17

Logic of ANOVA cont.

- How much should the sample averages differ before we conclude that the null hypothesis of equal population averages should be rejected?

- In ANOVA, the answer to this question is obtained by comparing the variation among the sample averages to the variation among observations within each of the samples.

- Only if the variation among sample averages is substantially larger than the variation within the samples do we conclude that the populations must have had different averages.

18

Three types of ANOVA

- One-way ANOVA

- Within-subjects ANOVA (repeated measures, randomized complete block)

- Factorial ANOVA (two-way ANOVA)

19

Sources of Variation

- Three sources of variation: 1) total, 2) between groups, 3) within groups

- Sum of Squares (SS): reflects variation. Depends on sample size.

- Degrees of freedom (df): based on the number of population averages being compared (between groups) and on the number of observations (within groups).

- Mean Square (MS): SS adjusted by df (MS = SS / df). Mean squares can be compared with each other.

- F statistic: used to determine whether the population averages are significantly different. If the computed F statistic is larger than the critical value that corresponds to a selected alpha level, the null hypothesis is rejected.

20

Computing F-ratio

- SS total: total variation in the data

- df total = total sample size (N) - 1

- MS total = SS total / df total

- SS between: variation among the groups compared

- df between = number of groups - 1

- MS between = SS between / df between

- SS within: variation among the scores that are in the same group

- df within = total sample size (N) - number of groups

- MS within = SS within / df within

- F ratio = MS between / MS within
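
The steps above can be sketched in Python for the three-curriculum example. The scores are hypothetical, and the critical value 3.89 is the tabulated F value for alpha = .05 with (2, 12) degrees of freedom. The sketch uses df within = N - number of groups, so that df between + df within = df total.

```python
# Hypothetical test scores for three curricula (illustrative values only).
groups = {
    "A": [82, 75, 90, 85, 78],
    "B": [70, 65, 74, 68, 73],
    "C": [88, 92, 85, 95, 90],
}

all_scores = [x for scores in groups.values() for x in scores]
total_n = len(all_scores)
num_groups = len(groups)
grand_mean = sum(all_scores) / total_n
group_means = {name: sum(s) / len(s) for name, s in groups.items()}

# Sums of squares: total, between groups, within groups.
ss_total = sum((x - grand_mean) ** 2 for x in all_scores)
ss_between = sum(
    len(s) * (group_means[name] - grand_mean) ** 2 for name, s in groups.items()
)
ss_within = sum(
    (x - group_means[name]) ** 2 for name, s in groups.items() for x in s
)

# Degrees of freedom and mean squares.
df_between = num_groups - 1
df_within = total_n - num_groups
ms_between = ss_between / df_between
ms_within = ss_within / df_within

f_ratio = ms_between / ms_within

f_critical = 3.89  # alpha = .05, df = (2, 12), from an F table
reject_null = f_ratio > f_critical

print(f"F({df_between}, {df_within}) = {f_ratio:.2f}, reject H0: {reject_null}")
```

SS total equals SS between plus SS within, which is a useful check on the arithmetic.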

21

Formula for One-way ANOVA
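
A standard way to write the one-way ANOVA F-ratio, consistent with the quantities defined under Computing F-ratio, is given below. The notation is an assumption made here: $k$ is the number of groups, $n_j$ the size of group $j$, $N$ the total sample size, $\bar{X}_j$ the mean of group $j$, and $\bar{X}$ the grand mean.

```latex
\[
SS_{\text{between}} = \sum_{j=1}^{k} n_j \left(\bar{X}_j - \bar{X}\right)^2,
\qquad
SS_{\text{within}} = \sum_{j=1}^{k} \sum_{i=1}^{n_j} \left(X_{ij} - \bar{X}_j\right)^2
\]
\[
F = \frac{MS_{\text{between}}}{MS_{\text{within}}}
  = \frac{SS_{\text{between}} / (k - 1)}{SS_{\text{within}} / (N - k)}
\]
```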

22

Alpha inflation

- Conducting multiple ANOVAs incurs a large risk that at least one of them will be statistically significant just by chance.

- The risk of committing a Type I error is very large for the entire set of ANOVAs.

- Example: 2 tests, alpha = .05

  - Probability of not having a Type I error on one test: .95

  - .95 x .95 = .9025

  - Probability of at least one Type I error: 1 - .9025 = .0975, close to 10%.

- Use a more stringent criterion, e.g. .001.
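
The arithmetic in this example generalizes to any number of tests. The sketch below assumes the tests are independent, which is what multiplying .95 by .95 implies; the function name is hypothetical.

```python
def familywise_error_rate(alpha: float, num_tests: int) -> float:
    """Probability of at least one Type I error across independent tests."""
    # Probability that every test avoids a Type I error is (1 - alpha) ** num_tests.
    return 1 - (1 - alpha) ** num_tests


for num_tests in (1, 2, 5, 10):
    rate = familywise_error_rate(0.05, num_tests)
    print(f"{num_tests:2d} tests at alpha = .05 -> overall risk = {rate:.4f}")
```

With two tests the result matches the .0975 worked out above, and the risk keeps growing as more tests are added.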

23

Relation between t-test and F-test

- When two groups are compared, the t-test and the F-test lead to the same answer.

- t² = F

- So by squaring t you'll get F

- (or the square root of F is |t|)
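
The relation between the two statistics can be verified numerically. This sketch uses hypothetical scores for two groups and the pooled-variance (equal variances assumed) form of the t-test, which is the form for which squaring t gives exactly the one-way ANOVA F; the helper names are made up for the illustration.

```python
import math
import statistics

# Hypothetical scores for two groups.
group_1 = [78, 85, 92, 88, 75, 81]
group_2 = [70, 74, 80, 68, 77, 73]


def pooled_t(a, b):
    """Independent-samples t statistic with pooled variance."""
    n_a, n_b = len(a), len(b)
    pooled_var = (
        (n_a - 1) * statistics.variance(a) + (n_b - 1) * statistics.variance(b)
    ) / (n_a + n_b - 2)
    se = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (statistics.mean(a) - statistics.mean(b)) / se


def one_way_f(groups):
    """One-way ANOVA F ratio: MS between / MS within."""
    all_scores = [x for g in groups for x in g]
    grand_mean = statistics.mean(all_scores)
    ss_between = sum(len(g) * (statistics.mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - statistics.mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within)


t_stat = pooled_t(group_1, group_2)
f_ratio = one_way_f([group_1, group_2])

print(f"t = {t_stat:.4f}, t squared = {t_stat ** 2:.4f}, F = {f_ratio:.4f}")
```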

24

Follow-up test

- Conducted to see specifically which means are different from which other means.

- Instead of repeating the t-test for each combination (which can lead to alpha inflation), there are modified versions of the t-test that adjust for the alpha inflation.

- Most recommended: Tukey HSD test

- Other popular tests: Bonferroni test, Scheffe test
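
Of the follow-up tests listed, the Bonferroni adjustment is the simplest to sketch: the overall alpha is divided by the number of pairwise comparisons, and each comparison is tested at that stricter level. The group labels below are hypothetical; Tukey HSD is not shown because it relies on the studentized range distribution, which is not available in the Python standard library.

```python
from itertools import combinations

group_names = ["A", "B", "C"]  # hypothetical group labels
overall_alpha = 0.05

# Every pair of groups gets one follow-up comparison.
pairs = list(combinations(group_names, 2))
num_comparisons = len(pairs)

# Bonferroni: test each comparison at alpha / number of comparisons.
adjusted_alpha = overall_alpha / num_comparisons

for first, second in pairs:
    print(f"compare {first} vs {second} at alpha = {adjusted_alpha:.4f}")
```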

25

Within-Subject (Repeated Measures) ANOVA

- SS tr: sum of squares for treatment

- SS block: sum of squares for block

- SS error = SS total - SS block - SS tr

- MS tr = SS tr / (k - 1)

- MSE = SS error / ((n - 1)(k - 1))

- F = MS tr / MSE
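
These formulas can be sketched for a small repeated-measures layout. The sketch assumes k is the number of treatments (columns) and n the number of blocks, i.e. subjects (rows); the scores are hypothetical, and 5.14 is the tabulated F critical value for alpha = .05 with (2, 6) degrees of freedom.

```python
# Rows are subjects (blocks); columns are treatments or time points.
# Hypothetical scores for 4 subjects measured under 3 conditions.
scores = [
    [10, 12, 15],
    [8, 11, 13],
    [12, 13, 17],
    [9, 10, 14],
]

n = len(scores)      # number of blocks (subjects)
k = len(scores[0])   # number of treatments

grand_mean = sum(sum(row) for row in scores) / (n * k)
treatment_means = [sum(row[j] for row in scores) / n for j in range(k)]
block_means = [sum(row) / k for row in scores]

# Partition the total variation into treatment, block, and error parts.
ss_total = sum((x - grand_mean) ** 2 for row in scores for x in row)
ss_tr = n * sum((m - grand_mean) ** 2 for m in treatment_means)
ss_block = k * sum((m - grand_mean) ** 2 for m in block_means)
ss_error = ss_total - ss_block - ss_tr

ms_tr = ss_tr / (k - 1)
mse = ss_error / ((n - 1) * (k - 1))
f_ratio = ms_tr / mse

f_critical = 5.14  # alpha = .05, df = (2, 6), from an F table
reject_null = f_ratio > f_critical

print(f"F({k - 1}, {(n - 1) * (k - 1)}) = {f_ratio:.2f}, reject H0: {reject_null}")
```

Removing the block (subject) variation from the error term is what distinguishes this design from a one-way ANOVA on the same numbers.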

26

Within-Subject (Repeated Measures) ANOVA

- Examines differences on a dependent variable that has been measured at more than two time points for one or more independent categorical variables.

27

Within-Subject (Repeated Measures) ANOVA

28

Factorial ANOVA

- T-test and one-way ANOVA

  - 1 independent variable (e.g. Gender), 1 dependent variable (e.g. Test score)

- Two-way ANOVA (Factorial ANOVA)

  - 2 (or more) independent variables (e.g. Gender and Academic Standing), 1 dependent variable (e.g. Test score)

29

(End of Analytic Method I)

30

Main Effects and Interaction Effects

- Main Effects

  - The effect of each independent variable on the dependent variable.

  - Differences between the group means for each independent variable on the dependent variable.

- Interaction Effect

  - When the relationship between the dependent variable and one independent variable differs according to the level of a second independent variable.

  - When the effect of one independent variable on the dependent variable differs at various levels of a second independent variable.
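
Main effects and interaction can be read off a table of cell means. The sketch below uses a hypothetical 2 x 2 design with made-up level names and mean test scores; it only describes the pattern in the means and does not test significance, which would require the factorial ANOVA F-tests.

```python
# Hypothetical cell means: test score by gender and academic standing.
cell_means = {
    ("female", "good"): 85.0,
    ("female", "probation"): 75.0,
    ("male", "good"): 80.0,
    ("male", "probation"): 60.0,
}


def marginal_mean(factor_index, level):
    """Average of the cell means at one level of one factor."""
    values = [m for key, m in cell_means.items() if key[factor_index] == level]
    return sum(values) / len(values)


# Main effects: difference between marginal means for each factor.
gender_main_effect = marginal_mean(0, "female") - marginal_mean(0, "male")
standing_main_effect = marginal_mean(1, "good") - marginal_mean(1, "probation")

# Effect of standing within each gender (simple effects).
standing_effect_female = cell_means[("female", "good")] - cell_means[("female", "probation")]
standing_effect_male = cell_means[("male", "good")] - cell_means[("male", "probation")]

# Interaction: the effect of standing differs across levels of gender.
interaction_contrast = standing_effect_female - standing_effect_male

print(f"main effect of gender: {gender_main_effect}")
print(f"main effect of standing: {standing_main_effect}")
print(f"interaction contrast: {interaction_contrast}")
```

An interaction contrast of zero would mean the effect of standing is the same for both genders; a non-zero value indicates the effects differ in these means.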

31

T-distribution

- A family of theoretical probability distributions used in hypothesis testing.

- As with normal distributions (or z-distributions), t distributions are unimodal, symmetrical and bell shaped.

- Important for interpreting data gathered on small samples when the population variance is unknown.

- The larger the sample, the more closely the t distribution approximates the normal distribution. For samples greater than 120, they are practically equivalent.