Business 260: Managerial Decision Analysis

1 / 68

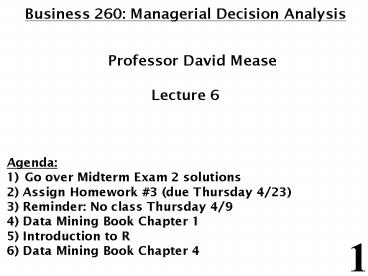

Title: Business 260: Managerial Decision Analysis

1

- Business 260: Managerial Decision Analysis

- Professor David Mease

- Lecture 6

- Agenda

- 1) Go over Midterm Exam 2 solutions

- 2) Assign Homework 3 (due Thursday 4/23)

- 3) Reminder: No class Thursday 4/9

- 4) Data Mining Book Chapter 1

- 5) Introduction to R

- 6) Data Mining Book Chapter 4

2

Homework 3

Homework 3 will be due Thursday 4/23. We will have our last exam that day after we review the solutions.

The homework is posted on the class web page: http://www.cob.sjsu.edu/mease_d/bus260/260homework.html

The solutions are posted so you can check your answers: http://www.cob.sjsu.edu/mease_d/bus260/260homework_solutions.html

3

No class Thursday 4/9

There is no class this coming Thursday 4/9.

4

Introduction to Data Mining by Tan, Steinbach, Kumar

Chapter 1: Introduction

(Chapter 1 is posted at http://www.cob.sjsu.edu/mease_d/bus260/chapter1.pdf)

5

- What is Data Mining?

- Data mining is the process of automatically discovering useful information in large data repositories. (page 2)

- There are many other definitions

6

In class exercise 82: Find a different definition of data mining online. How does it compare to the one in the text on the previous slide?

7

Data Mining Examples and Non-Examples

- Data Mining

- -Certain names are more prevalent in certain US locations (O'Brien, O'Rourke, O'Reilly in Boston area)

- -Group together similar documents returned by search engine according to their context (e.g. Amazon rainforest, Amazon.com, etc.)

- NOT Data Mining

- -Look up phone number in phone directory

- -Query a Web search engine for information about Amazon

8

- Why Mine Data? Scientific Viewpoint

- Data collected and stored at enormous speeds (GB/hour)

  - remote sensors on a satellite

  - telescopes scanning the skies

  - microarrays generating gene expression data

  - scientific simulations generating terabytes of data

- Traditional techniques infeasible for raw data

- Data mining may help scientists

  - in classifying and segmenting data

  - in hypothesis formation

9

- Why Mine Data? Commercial Viewpoint

- Lots of data is being collected and warehoused

- Web data, e-commerce

- Purchases at department/grocery stores

- Bank/credit card transactions

- Computers have become cheaper and more powerful

- Competitive pressure is strong

- Provide better, customized services for an edge

10

In class exercise 83: Give an example of something you did yesterday or today which resulted in data which could potentially be mined to discover useful information.

11

- Origins of Data Mining (page 6)

- Draws ideas from machine learning, AI, pattern recognition and statistics

- Traditional techniques may be unsuitable due to

  - Enormity of data

  - High dimensionality of data

  - Heterogeneous, distributed nature of data

[Figure: diagram with regions labeled AI/Machine Learning/Pattern Recognition, Statistics, and Data Mining]

12

- 2 Types of Data Mining Tasks (page 7)

- Prediction Methods

  - Use some variables to predict unknown or future values of other variables.

- Description Methods

  - Find human-interpretable patterns that describe the data.

13

- Examples of Data Mining Tasks

- Classification: Predictive (Chapters 4, 5)

- Regression: Predictive (covered in stats classes)

- Visualization: Descriptive (in Chapter 3)

- Association Analysis: Descriptive (Chapter 6)

- Clustering: Descriptive (Chapter 8)

- Anomaly Detection: Descriptive (Chapter 10)

14

- Introduction to R

15

- Introduction to R

- For the data mining part of this course we will use a statistical software package called R. R can be downloaded from

- http://cran.r-project.org/

- for Windows, Mac or Linux

16

- Downloading R for Windows

17

- Downloading R for Windows

18

- Downloading R for Windows

19

Reading Data into R

Download it from the web at www.stats202.com/stats202log.txt

Set your working directory:

    setwd("C:/Documents and Settings/David/Desktop")

Read it in:

    data <- read.csv("stats202log.txt", sep=" ", header=F)

20

Reading Data into R

Look at the first 5 rows:

    > data[1:5,]
                  V1 V2 V3                    V4     V5                        V6  V7   V8                V9
    1 69.224.117.122  -  - [19/Jun/2007:00:31:46 -0400]            GET / HTTP/1.1 200 2867 www.davemease.com
    2 69.224.117.122  -  - [19/Jun/2007:00:31:46 -0400]   GET /mease.jpg HTTP/1.1 200 4583 www.davemease.com
    3 69.224.117.122  -  - [19/Jun/2007:00:31:46 -0400] GET /favicon.ico HTTP/1.1 404 2295 www.davemease.com
    4 128.12.159.164  -  - [19/Jun/2007:02:50:41 -0400]            GET / HTTP/1.1 200 2867 www.davemease.com
    5 128.12.159.164  -  - [19/Jun/2007:02:50:42 -0400]   GET /mease.jpg HTTP/1.1 200 4583 www.davemease.com
      V10                                                             V11                                                                                        V12
    1 http://search.msn.com/results.aspx?q=mease&first=21&FORM=PERE2  Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; .NET CLR 1.1.4322)                      -
    2 http://www.davemease.com/                                       Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; .NET CLR 1.1.4322)                      -
    3 -                                                               Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; .NET CLR 1.1.4322)                      -
    4 -                                                               Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.4) Gecko/20070515 Firefox/2.0.0.4 -
    5 http://www.davemease.com/                                       Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.4) Gecko/20070515 Firefox/2.0.0.4 -

21

Reading Data into R

Look at the first column:

    > data[,1]
       [1] 69.224.117.122 69.224.117.122 69.224.117.122 128.12.159.164 128.12.159.164
           128.12.159.164 128.12.159.164 128.12.159.164 128.12.159.164 128.12.159.164
       ...
    [1901] 65.57.245.11   65.57.245.11   65.57.245.11   65.57.245.11   65.57.245.11
           65.57.245.11   65.57.245.11   65.57.245.11   65.57.245.11   65.57.245.11
    [1911] 65.57.245.11   67.164.82.184  67.164.82.184  67.164.82.184  171.66.214.36
           171.66.214.36  171.66.214.36  65.57.245.11   65.57.245.11   65.57.245.11
    [1921] 65.57.245.11   65.57.245.11
    73 Levels: 128.12.159.131 128.12.159.164 132.79.14.16 171.64.102.169 171.64.102.98
               171.66.214.36 196.209.251.3 202.160.180.150 202.160.180.57 ... 89.100.163.185

22

Reading Data into R

Look at the data in a spreadsheet format:

    edit(data)

23

Working with Data in R

Creating Data:

    > aa <- c(1,10,12)
    > aa
    [1]  1 10 12

Some simple operations:

    > aa + 10
    [1] 11 20 22
    > length(aa)
    [1] 3

24

Working with Data in R

Creating More Data:

    > bb <- c(2,6,79)
    > my_data_set <- data.frame(attributeA=aa, attributeB=bb)
    > my_data_set
      attributeA attributeB
    1          1          2
    2         10          6
    3         12         79

25

Working with Data in R

Indexing Data:

    > my_data_set[,1]
    [1]  1 10 12
    > my_data_set[1,]
      attributeA attributeB
    1          1          2
    > my_data_set[3,2]
    [1] 79
    > my_data_set[1:2,]
      attributeA attributeB
    1          1          2
    2         10          6

26

Working with Data in R

Indexing Data:

    > my_data_set[c(1,3),]
      attributeA attributeB
    1          1          2
    3         12         79

Arithmetic:

    > aa/bb
    [1] 0.5000000 1.6666667 0.1518987

27

Working with Data in R

Summary Statistics:

    > mean(my_data_set[,1])
    [1] 7.666667
    > median(my_data_set[,1])
    [1] 10
    > sqrt(var(my_data_set[,1]))
    [1] 5.859465

28

Working with Data in R

Writing Data:

    > write.csv(my_data_set, "my_data_set_file.csv")

Help!

    > ?write.csv

29

Introduction to Data Mining by Tan, Steinbach, Kumar

Chapter 4: Classification: Basic Concepts, Decision Trees, and Model Evaluation

(Chapter 4 is posted at http://www.cob.sjsu.edu/mease_d/bus260/chapter4.pdf)

30

- Illustration of the Classification Task

[Figure with boxes labeled Learning Algorithm and Model]

31

- Classification Definition

- Given a collection of records (training set)

  - Each record contains a set of attributes (x), with one additional attribute which is the class (y).

- Find a model to predict the class as a function of the values of other attributes.

- Goal: previously unseen records should be assigned a class as accurately as possible.

  - A test set is used to determine the accuracy of the model. Usually, the given data set is divided into training and test sets, with the training set used to build the model and the test set used to validate it.
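
The split into training and test sets can be sketched in R as follows. This is only an illustration: the built-in `iris` data and the two-thirds/one-third proportion are assumptions made for the example, not something specified in the lecture.

```r
# Hypothetical illustration: iris and the 2/3 fraction are assumptions,
# chosen only because iris ships with R.
set.seed(1)                                       # make the random split reproducible
n <- nrow(iris)
train_rows <- sample(n, size = round(2 * n / 3))  # row indices for the training set
train <- iris[train_rows, ]                       # used to build the model
test  <- iris[-train_rows, ]                      # held out to estimate accuracy
c(train = nrow(train), test = nrow(test))
```

Because the rows are drawn without replacement, no record appears in both sets, which is what makes the test-set accuracy an honest estimate for unseen records.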

32

- Classification Examples

- Classifying credit card transactions as legitimate or fraudulent

- Classifying secondary structures of protein as alpha-helix, beta-sheet, or random coil

- Categorizing news stories as finance, weather, entertainment, sports, etc.

- Predicting tumor cells as benign or malignant

33

- Classification Techniques

- There are many techniques/algorithms for carrying out classification

- In this chapter we will study only decision trees

- In Chapter 5 we will study other techniques, including some very modern and effective techniques

34

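- An Example of a Decision Tree

[Figure: Training Data table next to the tree below, labeled "Model: Decision Tree"; the attributes tested at the internal nodes are labeled "Splitting Attributes"]

- Refund

  - Yes: NO

  - No: MarSt

    - Married: NO

    - Single, Divorced: TaxInc

      - < 80K: NO

      - > 80K: YES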

35

Applying the Tree Model to Predict the Class for

a New Observation

Test Data

Start from the root of tree.

36

Applying the Tree Model to Predict the Class for a New Observation (slides 36-40)

Test Data

[Figure: the decision tree from the earlier slide (Refund, MarSt, TaxInc) is repeated on each of these slides while the test record is followed down from the root]

Assign Cheat to No
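
One way to see what "applying the tree" means is to hand-code the tree as nested tests. The sketch below assumes income is recorded in thousands and that an income of exactly 80 goes down the "> 80K" branch (the slides do not say which side the boundary belongs to); the function name and the sample record are made up for illustration.

```r
# Hand-coded version of the example tree (Refund -> MarSt -> TaxInc).
# Assumptions: taxinc is in thousands; exactly 80 is sent to the "> 80K" branch.
predict_cheat <- function(refund, marst, taxinc) {
  if (refund == "Yes") return("No")       # Refund = Yes leaf
  if (marst == "Married") return("No")    # MarSt = Married leaf
  if (taxinc < 80) "No" else "Yes"        # Single or Divorced: split on TaxInc
}

# Made-up record for illustration only
predict_cheat(refund = "No", marst = "Married", taxinc = 80)
```

Each call starts at the root and follows exactly one path to a leaf, so a prediction costs at most three comparisons for this tree.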

41

- Decision Trees in R

- The function rpart() in the library rpart generates decision trees in R.

- Be careful: This function also does regression trees which are for a numeric response. Make sure the function rpart() knows your class labels are a factor and not a numeric response.

- (if y is a factor then method="class" is assumed)
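
The factor-versus-numeric warning can be checked directly. This sketch uses the `kyphosis` data that ships with the rpart package (an assumption made for illustration; it is not the data set named in the exercises) and inspects the `method` component of the fitted object.

```r
library(rpart)

# Kyphosis is a factor (absent/present), so rpart() fits a classification tree.
fit_class <- rpart(Kyphosis ~ Age + Number + Start, data = kyphosis)
fit_class$method    # "class"

# The same labels recoded as 0/1 numbers: rpart() now fits a regression tree.
kyph_num <- kyphosis
kyph_num$Kyphosis <- as.numeric(kyphosis$Kyphosis == "present")
fit_reg <- rpart(Kyphosis ~ Age + Number + Start, data = kyph_num)
fit_reg$method      # "anova"
```

Wrapping the response in as.factor(), as the exercise solutions do, is the simplest way to avoid the second case.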

42

In class exercise 84: Below is output from the rpart() function. Use this tree to predict the class of the following observations:

a) (Age=middle Number=5 Start=10)

b) (Age=young Number=2 Start=17)

c) (Age=old Number=10 Start=6)

     1) root 81 17 absent (0.79012346 0.20987654)
       2) Start>=8.5 62 6 absent (0.90322581 0.09677419)
         4) Age=old,young 48 2 absent (0.95833333 0.04166667)
           8) Start>=13.5 25 0 absent (1.00000000 0.00000000)
           9) Start< 13.5 23 2 absent (0.91304348 0.08695652)
         5) Age=middle 14 4 absent (0.71428571 0.28571429)
          10) Start>=12.5 10 1 absent (0.90000000 0.10000000)
          11) Start< 12.5 4 1 present (0.25000000 0.75000000)
       3) Start< 8.5 19 8 present (0.42105263 0.57894737)
         6) Start< 4 10 4 absent (0.60000000 0.40000000)
          12) Number< 2.5 1 0 absent (1.00000000 0.00000000)
          13) Number>=2.5 9 4 absent (0.55555556 0.44444444)
         7) Start>=4 9 2 present (0.22222222 0.77777778)
          14) Number< 3.5 2 0 absent (1.00000000 0.00000000)
          15) Number>=3.5 7 0 present (0.00000000 1.00000000)

43

In class exercise 85: Use rpart() in R to fit a decision tree to last column of the sonar training data at http://www-stat.wharton.upenn.edu/~dmease/sonar_train.csv

Use all the default values. Compute the misclassification error on the training data and also on the test data at http://www-stat.wharton.upenn.edu/~dmease/sonar_test.csv

44

In class exercise 85, solution:

    install.packages("rpart")
    library(rpart)
    train <- read.csv("sonar_train.csv", header=FALSE)
    y <- as.factor(train[,61])
    x <- train[,1:60]
    fit <- rpart(y~., x)
    1 - sum(y == predict(fit, x, type="class"))/length(y)

45

In class exercise 85, solution (continued):

    test <- read.csv("sonar_test.csv", header=FALSE)
    y_test <- as.factor(test[,61])
    x_test <- test[,1:60]
    1 - sum(y_test == predict(fit, x_test, type="class"))/length(y_test)

46

In class exercise 86: Repeat the previous exercise for a tree of depth 1 by using control=rpart.control(maxdepth=1). Which model seems better?

47

In class exercise 86, solution:

    fit <- rpart(y~., x, control=rpart.control(maxdepth=1))
    1 - sum(y == predict(fit, x, type="class"))/length(y)
    1 - sum(y_test == predict(fit, x_test, type="class"))/length(y_test)

48

In class exercise 87: Repeat the previous exercise for a tree of depth 6 by using

    control=rpart.control(minsplit=0, minbucket=0, cp=-1, maxcompete=0, maxsurrogate=0, usesurrogate=0, xval=0, maxdepth=6)

Which model seems better?

49

In class exercise 87, solution:

    fit <- rpart(y~., x,
                 control=rpart.control(minsplit=0, minbucket=0, cp=-1,
                                       maxcompete=0, maxsurrogate=0, usesurrogate=0,
                                       xval=0, maxdepth=6))
    1 - sum(y == predict(fit, x, type="class"))/length(y)
    1 - sum(y_test == predict(fit, x_test, type="class"))/length(y_test)

50

- How are Decision Trees Generated?

- Many algorithms use a version of a top-down or divide-and-conquer approach known as Hunt's Algorithm (Page 152)

- Let Dt be the set of training records that reach a node t

  - If Dt contains records that all belong to the same class yt, then t is a leaf node labeled as yt

  - If Dt contains records that belong to more than one class, use an attribute test to split the data into smaller subsets. Recursively apply the procedure to each subset.
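
The recursion can be sketched in a few lines of R. This is a toy illustration under stated assumptions, not the textbook's or rpart's implementation: it handles numeric predictors only, uses binary splits at midpoints, picks the split by weighted Gini index, and falls back to a majority-class leaf when no split is possible. All function names and the toy data are made up.

```r
# Toy sketch of Hunt's algorithm (numeric predictors, binary splits).
gini <- function(y) { p <- table(y) / length(y); 1 - sum(p^2) }

# Search every predictor and every midpoint for the lowest weighted Gini.
best_split <- function(x, y) {
  best <- list(score = Inf)
  for (j in seq_along(x)) {
    v <- sort(unique(x[[j]]))
    if (length(v) < 2) next                      # constant column: cannot split
    cuts <- (head(v, -1) + tail(v, -1)) / 2      # midpoints between values
    for (cut in cuts) {
      left <- x[[j]] < cut
      score <- (sum(left) * gini(y[left]) + sum(!left) * gini(y[!left])) / length(y)
      if (score < best$score) best <- list(score = score, var = names(x)[j], cut = cut)
    }
  }
  best
}

hunt <- function(x, y) {
  majority <- names(which.max(table(y)))
  if (length(unique(y)) == 1) return(list(leaf = TRUE, class = majority))  # pure node
  s <- best_split(x, y)
  if (is.infinite(s$score)) return(list(leaf = TRUE, class = majority))    # no split left
  left <- x[[s$var]] < s$cut
  list(leaf = FALSE, var = s$var, cut = s$cut,
       left  = hunt(x[left, , drop = FALSE], y[left]),     # recurse on each subset
       right = hunt(x[!left, , drop = FALSE], y[!left]))
}

predict_hunt <- function(node, row) {
  while (!node$leaf) node <- if (row[[node$var]] < node$cut) node$left else node$right
  node$class
}

# Made-up data: class depends only on column a
x <- data.frame(a = c(1, 2, 3, 4), b = c(5, 5, 5, 5))
y <- c("n", "n", "y", "y")
tree <- hunt(x, y)
predict_hunt(tree, list(a = 1, b = 5))
```

With no stopping rule beyond purity, a tree grown this way keeps splitting until every leaf is pure or unsplittable, which is why the question of when to stop needs separate treatment.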

51

- An Example of Hunt's Algorithm

[Figure: tree-growing example; the first node is labeled "Don't Cheat"]

52

- How to Apply Hunt's Algorithm

- Usually it is done in a "greedy" fashion.

- "Greedy" means that the optimal split is chosen at each stage according to some criterion.

- This may not be optimal at the end even for the same criterion.

- However, the greedy approach is computationally efficient so it is popular.

53

- How to Apply Hunt's Algorithm (continued)

- Using the greedy approach we still have to decide 3 things:

  - 1) What attribute test conditions to consider

  - 2) What criterion to use to select the "best" split

  - 3) When to stop splitting

- For 1 we will consider only binary splits for both numeric and categorical predictors as discussed on the next slide

- For 2 we will consider misclassification error, Gini index and entropy

- 3 is a subtle business involving model selection. It is tricky because we don't want to overfit or underfit.

54

- 1) What Attribute Test Conditions to Consider (Section 4.3.3, Page 155)

- We will consider only binary splits for both numeric and categorical predictors as discussed, but your book talks about multiway splits also

- Nominal

- Ordinal: like nominal but don't break order with split

- Numeric: often use midpoints between numbers

[Figure: example binary split "Taxable Income > 80K?" with branches Yes and No]

55

- 2) What criterion to use to select the "best" split (Section 4.3.4, Page 158)

- We will consider misclassification error, Gini index and entropy

  - Misclassification Error

  - Gini Index

  - Entropy

56

- Misclassification Error

- Misclassification error is usually our final metric which we want to minimize on the test set, so there is a logical argument for using it as the split criterion

- It is simply the fraction of total cases misclassified

- 1 - Misclassification error = "Accuracy" (page 149)
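
As a small sketch of the definition, misclassification error is the share of predictions that differ from the true labels, and accuracy is its complement. The function name and the label vectors below are made up for illustration.

```r
# Fraction of cases where the predicted class differs from the actual class
misclass_error <- function(actual, predicted) mean(actual != predicted)

# Made-up labels: one of the five predictions is wrong
actual    <- c("yes", "no", "no",  "yes", "no")
predicted <- c("yes", "no", "yes", "yes", "no")

err <- misclass_error(actual, predicted)
acc <- 1 - err
c(error = err, accuracy = acc)
```

This is the same quantity the exercise solutions compute as 1 - sum(y == predict(...))/length(y).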

57

In class exercise 88: This is textbook question 7 part (a) on page 201.

58

- Gini Index

- This is commonly used in many algorithms like CART and the rpart() function in R

- After the Gini index is computed in each node, the overall value of the Gini index is computed as the weighted average of the Gini index in each node

59

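- Gini Examples for a Single Node

$P(C_1) = 0/6 = 0,\quad P(C_2) = 6/6 = 1,\quad \text{Gini} = 1 - P(C_1)^2 - P(C_2)^2 = 1 - 0 - 1 = 0$

$P(C_1) = 1/6,\quad P(C_2) = 5/6,\quad \text{Gini} = 1 - (1/6)^2 - (5/6)^2 = 0.278$

$P(C_1) = 2/6,\quad P(C_2) = 4/6,\quad \text{Gini} = 1 - (2/6)^2 - (4/6)^2 = 0.444$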
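
The three single-node examples can be reproduced with a short helper. The function name is made up; it takes the class counts in a node and applies one minus the sum of squared class proportions.

```r
# Gini index of a node from its class counts: 1 - sum of squared proportions
gini <- function(counts) {
  p <- counts / sum(counts)
  1 - sum(p^2)
}

# Class counts (C1, C2) from the three examples above
round(c(gini(c(0, 6)), gini(c(1, 5)), gini(c(2, 4))), 3)
```

The index is 0 for a pure node and grows as the classes become more evenly mixed.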

60

In class exercise 89: This is textbook question 3 part (f) on page 200.

61

- Misclassification Error Vs. Gini Index

- The Gini index decreases from .42 to .343 while the misclassification error stays at 30%. This illustrates why we often want to use a surrogate loss function like the Gini index even if we really only care about misclassification.

[Figure: split on attribute A? with branch Yes leading to Node N1 and branch No leading to Node N2]

$\text{Gini}(N_1) = 1 - (3/3)^2 - (0/3)^2 = 0$

$\text{Gini}(N_2) = 1 - (4/7)^2 - (3/7)^2 = 0.490$

$\text{Gini}(\text{Children}) = 3/10 \times 0 + 7/10 \times 0.49 = 0.343$
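
The comparison can be checked numerically. In this sketch the child counts (3, 0) and (4, 3) are read off the Gini formulas above, and the parent counts (7, 3) are derived by adding them; the helper names are made up.

```r
gini     <- function(counts) { p <- counts / sum(counts); 1 - sum(p^2) }
misclass <- function(counts) 1 - max(counts) / sum(counts)

# Weighted average of an impurity measure over a list of child nodes
weighted <- function(f, nodes) {
  n <- sapply(nodes, sum)
  sum(n / sum(n) * sapply(nodes, f))
}

n1 <- c(3, 0)        # Node N1 class counts
n2 <- c(4, 3)        # Node N2 class counts
parent <- n1 + n2    # derived: (7, 3)

round(c(gini_before     = gini(parent),
        gini_after      = weighted(gini, list(n1, n2)),
        misclass_before = misclass(parent),
        misclass_after  = weighted(misclass, list(n1, n2))), 3)
```

The split creates one pure child, which the Gini index rewards, while the misclassification error sees no change because the same three records are still misclassified.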

62

- Entropy

- Measures purity similar to Gini

- Used in C4.5

- After the entropy is computed in each node, the overall value of the entropy is computed as the weighted average of the entropy in each node as with the Gini index

- The decrease in Entropy is called "information gain" (page 160)

63

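- Entropy Examples for a Single Node

$P(C_1) = 0/6 = 0,\quad P(C_2) = 6/6 = 1,\quad \text{Entropy} = -0 \log_2 0 - 1 \log_2 1 = -0 - 0 = 0$

$P(C_1) = 1/6,\quad P(C_2) = 5/6,\quad \text{Entropy} = -(1/6) \log_2 (1/6) - (5/6) \log_2 (5/6) = 0.65$

$P(C_1) = 2/6,\quad P(C_2) = 4/6,\quad \text{Entropy} = -(2/6) \log_2 (2/6) - (4/6) \log_2 (4/6) = 0.92$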
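
These values can be reproduced with a short helper, sketched here under the usual convention that 0 log 0 is treated as 0. The function name is made up.

```r
# Entropy (base 2) of a node from its class counts
entropy <- function(counts) {
  p <- counts / sum(counts)
  p <- p[p > 0]              # drop empty classes: 0 * log2(0) is taken as 0
  -sum(p * log2(p))
}

# Class counts (C1, C2) from the three examples above
round(c(entropy(c(0, 6)), entropy(c(1, 5)), entropy(c(2, 4))), 3)
```

To three decimals the last two values are 0.650 and 0.918, which round to the 0.65 and 0.92 shown on the slide.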

64

In class exercise 90: This is textbook question 5 part (a) on page 200.

65

In class exercise 91: This is textbook question 3 part (c) on page 199.

66

- A Graphical Comparison
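
The figure for this slide did not survive, but a comparison of this kind can be sketched for a two-class node by plotting each measure against p = P(C1). The grid of p values, the line styles and the legend placement are arbitrary choices made for the sketch.

```r
p <- seq(0, 1, by = 0.01)                # proportion of class C1 in the node

misclass <- 1 - pmax(p, 1 - p)           # misclassification error
gini     <- 1 - p^2 - (1 - p)^2          # Gini index
plogp    <- function(q) ifelse(q > 0, q * log2(q), 0)   # 0 * log2(0) taken as 0
entropy  <- -(plogp(p) + plogp(1 - p))   # entropy, base 2

# One curve per measure, distinguished by line type
matplot(p, cbind(entropy, gini, misclass), type = "l", lty = 1:3, col = 1,
        xlab = "p = P(C1)", ylab = "impurity")
legend("bottom", legend = c("Entropy", "Gini index", "Misclassification error"),
       lty = 1:3)
```

All three measures are zero for a pure node and peak at p = 0.5, where entropy reaches 1 and the other two reach 0.5.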

67

- 3) When to stop splitting

- This is a subtle business involving model selection. It is tricky because we don't want to overfit or underfit.

- One idea would be to monitor misclassification error (or the Gini index or entropy) on the test data set and stop when this begins to increase.

- "Pruning" is a more popular technique.

68

- Pruning

- "Pruning" is a popular technique for choosing the right tree size

- Your book calls it post-pruning (page 185) to differentiate it from prepruning

- With (post-) pruning, a large tree is first grown top-down by one criterion and then trimmed back in a bottom-up approach according to a second criterion

- rpart() uses (post-) pruning since it basically follows the CART algorithm

- (Breiman, Friedman, Olshen, and Stone, 1984, Classification and Regression Trees)
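
A grow-then-trim workflow with rpart might look like the sketch below. It assumes the `kyphosis` data that ships with rpart (not a data set from this lecture), grows a deliberately large tree by turning off the complexity penalty, and then trims it at the complexity parameter with the lowest cross-validated error. Choosing cp that way is one common convention, not something the slides prescribe.

```r
library(rpart)
set.seed(1)   # the cross-validation inside rpart() is random

# Step 1: grow a large tree (cp = 0 and minsplit = 2 remove the usual limits)
full <- rpart(Kyphosis ~ Age + Number + Start, data = kyphosis,
              control = rpart.control(cp = 0, minsplit = 2))
printcp(full)  # table of cp values with cross-validated error (xerror)

# Step 2: trim back at the cp value with the smallest cross-validated error
best_cp <- full$cptable[which.min(full$cptable[, "xerror"]), "CP"]
pruned  <- prune(full, cp = best_cp)

# Number of nodes before and after pruning
c(full = nrow(full$frame), pruned = nrow(pruned$frame))
```

The two steps use different criteria, as the slide describes: the tree is grown by the Gini index on the training data and trimmed by cross-validated error.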