Title: Types of paper (CS Education)

1

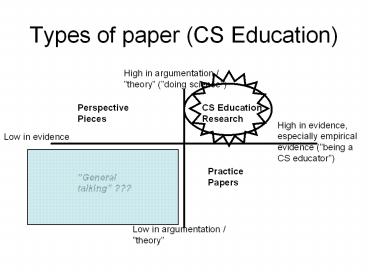

Types of paper (CS Education)

High in argumentation / theory (doing science)

Perspective Pieces

CS Education Research

High in evidence, especially empirical evidence

(being a CS educator)

Low in evidence

Practice Papers

General talking ???

Low in argumentation / theory

2

Two (2½) important positions

- Karl Popper (1959)
  - Scientific hypotheses are by definition refutable (Kant: analytic a posteriori). We try to find ways of falsifying a hypothesis, but if we don't succeed, the hypothesis is strengthened.
  - Other findings and claims are quasi-science.
  - But is this rigor necessary for practical purposes?
- Thomas Kuhn (1970)
  - Science and society always have a set of basic beliefs that are taken for granted (paradigms); we are normally not able to break out of these in our thinking and in our science.
  - Throughout history, there have been many paradigm shifts.
- Others claim that there is a continuous struggle between different paradigms, and that it is often unclear which ones are the most dominant today (e.g. Brian Fay, Contemporary Philosophy of Social Science).

=>

- Kant: synthetic a priori; stable, obvious knowledge, totally the paradigms
- Kuhn: paradigm shifts
- Fay and others: translation of paradigms; parallel, competing paradigms

3

Scientific method vs. Method of Science

- Some researchers distinguish between
  - Scientific Method in the Popper sense, which in fact must conclude that we never know for sure.
  - Method of Science in a less strict sense, where articulation / formulation of the hypothesis may play a crucial role, and the results are well enough established (supported by evidence) to be used in practice.

4

Method of Science: inductive vs. deductive approach

- Induction
  - questions
  - identification of regularities
  - general theory
  - utility
  - practical questions, good enough answers
  - often qualitative research
- Deduction
  - answers
  - method of testing hypotheses
  - scientific method
  - replicability/repeatability
  - strengthen the belief in the answer I think I have
  - quantitative research

Note

5

Hard vs. soft aspects of a science

- Biochemistry vs. social medicine
- Natural vs. social geography
- Electronics vs. socio-informatics
- Child psychology vs. philosophy of education
- This means that different methodologies are used in different parts of a discipline.
- Is informatics one or two sciences?
- Some claim that only the hard part is real science, indisputable knowledge.

6

Epistemology (theory of knowledge)

- The field includes questions like

- what is knowing?

- how do we know what we (think we) know?

- the background for scientific research

- is knowledge sharable?

7

The more philosophical questions (not adapted from the book)

- What is truth (if any)?
- Is there any objective truth, independent of and outside us?
- Or are all truths subjective, constructed within us?
- Are they, then, sharable at all?
- Must we trust a kind of intersubjective, common understanding, or a kind of inner being of things (German: Sein), cf. Aristotle?

8

Theories

Empirical laws: repeatable, quantitative

Explanatory theories: often fewer, but deeper experiments/interviews, evidence based, and/or many dimensions

General talking with no evidence, general description

natural social

science cause and effect.

9

Models

- of something else
- simplified version of something, i.e. things are left out (may not be a value-free choice)
- types of model (e.g.)
  - physical models
  - mental models
  - written models
  - graphical models
- purpose of a model (e.g.)
  - perform a study, i.e. to check a theory
  - explain a procedure
  - explain a relationship, i.e. between things
  - explain / illustrate a theory

10

Note

- A theory, a scientific law or a model is different from the phenomena it describes and is, as such, not observable (David Hume).
- Some theories are deterministic, in the sense that things must be such and such.
- Others are probabilistic.
- Others presuppose that man may behave differently, that man has a kind of free will. (A stone cannot decide, e.g., not to fall, but a person may decide, e.g., not to drive a car.)
- Perfect objectivity is never achievable => it would suppose that we are completely outside the world we are observing.

11

Conceptual frameworks

- Research and knowledge are always based on assumptions that we take for granted (axioms, paradigms, values).
  - political assumptions
  - ideological assumptions
  - scientific assumptions (both basis and methodology)
  - historical example: the geocentric vs. the heliocentric view of the world
  - e.g. is the UN declaration of human rights a consequence of a Western way of thinking? Would the declaration have been different if rewritten today?
- Critical theory / enquiry / thinking is a research tradition that tries to question these assumptions (see F & P, part 2, ch. 2)
  - The Frankfurt School (Frankfurter Schule), J. Habermas and others.
  - interpreting the assumptions as value-loaded
  - what questions are important to ask?
  - are there hidden agendas (cf. the hidden curriculum in pedagogics)?
  - often political background
  - touches on the questions of objectivity/absoluteness vs. subjectivity/relativity in science and philosophy.
  - but is critique of ideology in itself an ideology? (Ideologikritikk som ideologi, Sigurd Skirbekk, UiO)

12

Including Critical Enquiry as a research method

- Thinking of critical enquiry as an alternative approach, part 2, ch. 2 tries to summarize different methods into (note: table not taken from the book):
  - Scientific / scientistic approach: objective / positivistic; finding empirical laws
  - Interpretivistic approach: subjective; finding explanatory theories
  - Critical approach: emancipatory; re-interpreting, changing the way we are used to thinking
- Note: The critical theory / critical enquiry tradition is in itself a theory-loaded and value-loaded tradition, based upon a Marxist (and to some extent Freudian) view of the world.
  - I.e. critique of existing structures, etc. is not necessarily based upon all assumptions from the Critical Enquiry school.

14

A possible parallel from systems development (not taken from the book)

- In the analysis and construction of information systems, we may see the process mainly as
  - an objective information analysis, the information-theoretical school (objective, one answer, harmony)
  - a joint optimization of social and technical possibilities, socio-technical development (subjective, harmony)
  - a workers' / union struggle (the trade-union tradition, Norwegian: fagpolitisk tradisjon; conflict) between the interests of
    - the workers
    - the owners
    - the management
- Please note the parallels with research traditions.
  - (More on this: Jørgen Bansler, System development theory and history in a Scandinavian perspective (in Danish).)

15

A short discussion (discuss one of)

- Is systems development value neutral?
- When designing systems for an organization, who should you represent / have solidarity with?
  - the bosses?
  - the workers?
  - the ones agreeing with you?
- Are there technological aspects (i.e. in CS itself) which are relevant to this?
- Pedagogical aspects of this way of thinking?
  - for informatics education
  - for systems development within and for organizations
- Additional comments from anybody?

16

Method of Science: inductive vs. deductive approach (repeated from ch. 1)

- Induction
  - questions
  - identification of regularities
  - general theory
  - utility
  - practical questions, good enough answers
  - often qualitative research
- Deduction
  - answers
  - method of testing hypotheses
  - scientific method
  - replicability/repeatability
  - strengthen the belief in the answer I think I have
  - quantitative research

Note

17

Empirical studies 1-2-3

1. Figure out what the question is, with operationalization.
2. Decide what sort of evidence will satisfy you (cf. confidence intervals in statistics). To be stated before the data gathering!
3. Choose a technique that will produce the required evidence, or weaken it. Negative answers may be good answers!

18

Research process

- Top-down ?

- Bottom-up ?

- Middle-up ?

- The hermeneutic circle?

Additional note: Top-down in systems development, reality or illusion?

Generalizations / Theory / Top

Concretizations / Praxis / Bottom

19

Kolb's learning circle

1. Concrete experience
2. Observations and reflections
3. Formation of abstract concepts and generalizations
4. Testing implications of new concepts in new situations (leading back to step 1)

- A central model in the theory of organizational learning.
- Continuous improvement
- May also be used as a research model
- May be seen as a development of the hermeneutic circle

20

A note on process vs. product

- The product will have a linear (page 1, 2, ...) and hierarchical (ch. 2, 2.1, 2.1.1 etc.) structure.
- The process will certainly be neither linear nor hierarchical.

http://www.csuohio.edu/writingcenter/writproc.html (13.03.04)

21

Title / problem description

- The problem description may also be the title, but not vice versa.
- The problem description is often a question that you want to answer.
- Be sure to check the correspondence!
- Both must be unbiased and precise, and must not give away the answer.
  - Why do Informatics students need to know a lot about hardware?
  - Is Linux a better alternative?
- Proposals, new ideas, etc. may be allowed, but must at least include a discussion of pros and cons, using relevant references if applicable.
- If you don't know what you are doing, don't do it on a big scale (from Tom Gilb, Principles of Software Development).

22

Title / problem / result, I

I've made up my mind already, don't disturb me with facts

23

Title / problem / result, II

This is my conclusion. Give me some data to prove it!

24

Reliability and validity questions

- Validity questions
  - Construct: between the construct (concept) and the operational variables
  - Internal: i.e. between the independent and the dependent variable
  - External: e.g. between the sample and the population
  - Discriminant: between this and other problem descriptions
  - Convergent: do the operational questions cover the problem description?
  - others ...
- Reliability questions
  - inner strength of the measurement, i.e.
    - $X_{\text{obs}} = x_{\text{true}} + \text{err}_{\text{syst}} + \text{err}_{\text{random}}$
  - to ensure good reliability: retest, split-half, etc.
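The split-half check named above can be sketched in Python. This is only a minimal illustration under stated assumptions: the questionnaire data are made up, the function names are hypothetical, and the Spearman-Brown correction is a standard step that the slide itself does not mention.

```python
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long lists."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def split_half_reliability(responses):
    """Correlate the totals of two item halves, then apply Spearman-Brown."""
    first_half = [sum(r[0::2]) for r in responses]   # items 1, 3, ...
    second_half = [sum(r[1::2]) for r in responses]  # items 2, 4, ...
    r_half = pearson(first_half, second_half)
    # Spearman-Brown: estimate reliability of the full-length instrument.
    return 2 * r_half / (1 + r_half)


# Hypothetical data: five respondents, four items on a 1-5 scale.
responses = [
    [4, 5, 4, 5],
    [2, 1, 2, 2],
    [3, 3, 4, 3],
    [5, 5, 5, 4],
    [1, 2, 1, 1],
]
reliability = split_half_reliability(responses)
print(f"split-half reliability: {reliability:.2f}")
```

A value close to 1 suggests that the random error term is small relative to the true score; a systematic error would not show up in this check, since it shifts both halves alike.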

25

Operationalization

[Diagram: the problem description branches into aspects 1, 2, 3, ..., n; the aspects map to measurable variables 1, 2, 3, ..., n.]

- All aspects covered? (Convergent validity, i.e. do the operational variables converge to the problem description?)
- Measures the problem description, and nothing else? (Discriminant validity)

26

Operationalization

[Diagram: problem 1 and problem 2 branch into aspects 1, 2, 3, ..., n; the aspects map to measurable variables 1, 2, 3, ..., n.]

- All aspects covered? (Convergent validity, i.e. do the operational variables converge to the problem description?)
- Measures the problem description, and nothing else? (Discriminant validity)

27

Some data collection methods

qualitative

- Case studies
- Diary studies
- Constrained tasks (activities), quasi-experiments, field experiments
- Document studies
- Observations (results may be treated qualitatively or quantitatively)
- Survey research and questionnaires (paper, online, telephone, ...)
- Protocol analysis
  - search for occurrences of predefined categories
  - search for patterns
- Automatic logging
- Controlled experiments
  - ideally randomized and double-blind, but very often not achievable
  - between subjects or within subjects, repeated or not repeated

quantitative

28

Method triangulation

- Stereo view

- Combining different types of methods, e.g.

- both qualitative and quantitative methods

- Gives better validity of the total study

29

Some simple quantitative data analysis methods

- Average, standard deviation (measuring the degree of variation in the data)
- Linear regression
  - R ≈ 1: strong positive correlation
  - R ≈ 0: no correlation
  - R ≈ -1: strong negative correlation
  - Note: correlation does not mean cause-and-effect!
- χ²-tests, other hypothesis-testing techniques
  - Testing against different distributions, e.g. normal, binomial, etc.
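The methods listed above can be tried out with a few lines of Python. The sketch below uses only the standard library; the data sets (practice hours, test scores, coin flips) and the helper names are invented for illustration and do not come from the slides.

```python
from statistics import mean, stdev

# Hypothetical data: hours of practice and test score for five students.
hours = [1, 2, 3, 4, 5]
scores = [52, 55, 61, 64, 68]


def pearson_r(xs, ys):
    """Correlation coefficient R between -1 and 1."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def fit_line(xs, ys):
    """Least-squares regression line y = slope * x + intercept."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, my - slope * mx


def chi_square(observed, expected):
    """Chi-square goodness-of-fit statistic."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))


slope, intercept = fit_line(hours, scores)
r = pearson_r(hours, scores)

# Hypothetical coin experiment: 60 heads and 40 tails against a fair 50/50.
chi2 = chi_square([60, 40], [50, 50])
CRITICAL_5_PERCENT_DF1 = 3.841  # tabulated value for 1 degree of freedom

print(f"mean={mean(scores)}, stdev={stdev(scores):.2f}")
print(f"score = {slope:.2f} * hours + {intercept:.2f}, R = {r:.3f}")
print(f"chi2 = {chi2:.2f}, reject fairness at 5%: {chi2 > CRITICAL_5_PERCENT_DF1}")
```

Even with R close to 1, the regression only describes how the two variables move together in this sample; it says nothing about which one, if either, causes the other.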

30

Watch out for hidden connections !

- Normal cause → effect
- Indirect cause → effect (A often more general)
- Spurious association between B and C: B and C correlate, but are not causally related (e.g. ice cream and crimes)

[Diagrams: three graphs over the nodes A, B and C, one for each case above.]
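A small simulation can make the spurious case concrete. The sketch below assumes one common reading of the ice-cream example, namely that a third variable A (here temperature, which the slide does not name) drives both B (ice-cream sales) and C (crimes). All numbers and names are invented for illustration.

```python
import random
from statistics import mean


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equally long lists."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def partial_r(r_bc, r_ab, r_ac):
    """Correlation between B and C after controlling for A."""
    return (r_bc - r_ab * r_ac) / ((1 - r_ab ** 2) * (1 - r_ac ** 2)) ** 0.5


rng = random.Random(42)  # fixed seed so the run is repeatable

# A: hypothetical daily temperature; B and C each depend on A plus
# independent noise, but never on each other.
temperature = [rng.gauss(20, 5) for _ in range(1000)]
ice_cream = [2.0 * t + rng.gauss(0, 5) for t in temperature]
crimes = [0.5 * t + rng.gauss(0, 2) for t in temperature]

r_bc = pearson_r(ice_cream, crimes)
r_ab = pearson_r(temperature, ice_cream)
r_ac = pearson_r(temperature, crimes)
r_bc_given_a = partial_r(r_bc, r_ab, r_ac)

print(f"R(B, C)            = {r_bc:.2f}")
print(f"R(B, C | A fixed)  = {r_bc_given_a:.2f}")
```

In this setup B and C correlate clearly, while the partial correlation that holds A fixed should come out close to zero, which is the signature of a spurious association.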

31

Generalization

- Population vs. sample: what can be concluded about all of them (the population) when investigating these (the sample)?
- Sample size
- Representativeness
- Confidence interval (with 95% probability, the correct result is between x and y).
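A 95% confidence interval for a sample mean could be computed roughly as follows. This is a sketch under assumptions: the sample values and the function name are hypothetical, and the normal approximation is used for simplicity, although for small samples a t-distribution would usually be preferred.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def mean_confidence_interval(sample, confidence=0.95):
    """Normal-approximation confidence interval for the population mean."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    centre = mean(sample)
    half_width = z * stdev(sample) / sqrt(len(sample))
    return centre - half_width, centre + half_width


# Hypothetical sample: task completion times (minutes) for ten students.
sample = [10, 12, 9, 11, 13, 10, 12, 11, 9, 13]
low, high = mean_confidence_interval(sample)
print(f"95% confidence interval for the mean: {low:.2f} to {high:.2f}")
```

Strictly speaking, the 95% refers to the procedure: if the sampling were repeated many times, about 95% of the intervals built this way would contain the true population mean. A larger or more homogeneous sample gives a narrower interval.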

32

Be aware of

- Legal issues
- Ethical issues
- Anthropology
- Psychological issues (both for observer and respondent)
- Language issues (e.g. clear and unbiased formulations)

33

Overview

- Qualitative

- Open

- Generating hypotheses / giving evidence

- Few cases

- Unique cases

- In depth

- Many variables

- Quantitative

- Closed

- Testing hypothesis

- Many cases

- Equal test situation

- Few aspects

- Isolation of variables

Many of you will probably be somewhere in the

middle

34

Some advice

- A combination of methods will often be valuable

- Some closed ranking questions (e.g. 1-5)

- Your own comments on the topic

- Open questions, other comments

- Be aware of one-sided vs. two-sided questions

- Be proud, but humble

35

Summing up

- There are a lot of different research techniques

- Be conscious about your choice of method(s)