Project : Phase 1 Grading PowerPoint PPT Presentation

1 / 19

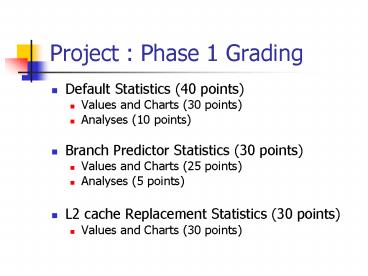

Title: Project : Phase 1 Grading

1

Project Phase 1 Grading

- Default Statistics (40 points)

- Values and Charts (30 points)

- Analyses (10 points)

- Branch Predictor Statistics (30 points)

- Values and Charts (25 points)

- Analyses (5 points)

- L2 cache Replacement Statistics (30 points)

- Values and Charts (30 points)

2

Default Statistics Analyses

- CPI affected by

- Percentage of branches, predictability of

branches - Cache hit rates

- Parallelism inherent in programs

- CPI of cc and go higher than others

- Larger percentage of tough to predict branches

- cc 17 branches abt 12 of which is

miss-predicted - Go 13 branches abt 20 of which is

miss-predicted - CPI of cc higher than go

- L1 miss rate of cc (2.6) is higher than go (0.6)

3

Default Statistics Analyses

- Compress has high miss rates

- Smaller execution run compulsory misses

- L2 miss rate of anagram high

- Very few L2 accesses compulsory misses

- Program based analyses

- Gcc has lot of branches

- Go program has small memory footprint

- Anagram is a simple program

- Compress input file only 20 bytes

- Note All are integer programs

- CPI lt 1, multiple issue, out of order

4

Branch Predictor Statistics

- Perfect gt Bimodal gt taken not-taken

- Variation across benchmarks (2 points)

- Go and cc show greatest variation

- They have significant number of tough to predict

branches.

5

L2 replacement policies

- No great change in miss-rate or CPI

- 30 points for the values and plots

- L1 cache was big so very few L2 accesses

- Associativity of L2 cache was small

- LRU gt FIFO gt Random

6

Distribution

- 90 100

7

Phase 2 Profile guided OPT

- Profiling Run

- Run un-optimized code with sample inputs

- Instrument code to collect information about the

run - Callgraph frequencies

- Basicblock frequencies

- Recompile

- Use collected information to produce better code

- Inlining

- Put hot code together to improve I

8

Phase 2 Compiler branch hints

- if (error) // not-taken

- Compiler provides hints about branches

taken/not-taken using profile information - In this question

- Learn to use simulator as a profiler

- Learn to estimate benefits of optimizations.

9

Example

- Simple loop

- 1000

- 1004

- // mostly not taken

- 1008 jz 1020

- 1012 jmp 1000

- For each branch mark taken or not-taken

- Taken gt 50

- Mark taken

- Not-taken gt 50

- Mark Not-taken

- In the above example

- 1008 not-taken

- 1032 not-taken

- 1064 taken

10

Profiling Run

- For each static branch instruction

- Collect execution frequency

- Percentage taken/not-taken

- Modify bpred_update function in bpred.c

- Maintain data structure for each branch

instruction indexed by instruction address - Maintain frequency, taken information

- Dump this information in the end.

11

Analysis

- From the information collected

- If branch is taken gt 50 of time, mark taken

- Otherwise not-taken

- Remember the instruction addresses and the hint.

12

Performance Estimation

- For all branches

- Predict taken/ not-taken according to the hint

- You may want to load all the hints into a data

structure at the start. - Data structure similar to one used for profiling.

- Indexed by branch instruction address.

- Estimate new CPI

- Notes

- Sufficient to do this for cc and anagram.

- After modifying SimpleScalar need to make !!!

13

Phase2 L2 replacement policy

- LRU policy

- Works well

- HW complexity is high

- Number of status bits to track when each block in

a set is last accessed - This number increases with associativity.

- PLRU

- Pseudo LRU policies

- Simpler replacement policy that attempts to mimic

LRU.

14

Tree based PLRU policy

- For a n way cache, there are nway -1 binary

decision bits - Let us consider a 4 way set associative cache

- L0, L1, L2 and L3 are the blocks in the set

- B0, B1 and B2 are decision bits

15

Tree based LRU for 4 way

16

Notes

- Use a 4K direct mapped L1 cache

- Hopefully this should lead to L2 accesses!

- Use a 16 way 256 KB L2 cache

- Hopefully enough ways to make a difference!

- Compare PLRU with LRU, FIFO and Random

- Sufficient to do this experiment for cc and

anagram!

17

Perfect Mem Disambiguation

- Memory Disambiguation

- Techniques employed by processor to execute

loads/stores out of order - Use a HW structure called Load/Store queue

- Tracks addresses / values of loads and stores

- Load can be issued from LSQ

- If there are no prior stores writing to the same

address. - If address in unknown, then cant issue load

- Perfect Disambiguation

- All addresses are known

18

How are addresses known

- Two ways to do this

- Trace based Run once and collect and remember

all the addresses - All registers values are actually known to the

simulator through functional simulation - Even though a register is yet to be computed,

the simulator knows the value - Look at lsq_refresh() function in sim-outorder.c

- To give you flexibility to do both ways

- Simulate only a million instructions

- Fast forward 100 million instructions

19

Mem Disambiguation

- Compare CPI with and without perfect

disambiguation - Sufficient to do this for cc and go

- -fastfwd 100 million instructions

- Simulate for additional 1 million instructions