Four Steps of Speculative Tomasulo Algorithm PowerPoint PPT Presentation

Title: Four Steps of Speculative Tomasulo Algorithm

1

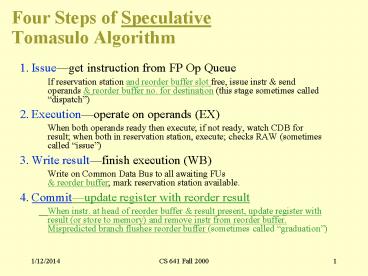

Four Steps of Speculative Tomasulo Algorithm

- 1. Issue: get instruction from FP Op Queue
  - If a reservation station and a reorder buffer slot are free, issue the instruction and send the operands and the reorder buffer no. for the destination (this stage is sometimes called "dispatch")
- 2. Execution: operate on operands (EX)
  - When both operands are ready, execute; if not ready, watch the CDB for the result. When both are in the reservation station, execute; this checks RAW (sometimes called "issue")
- 3. Write result: finish execution (WB)
  - Write on the Common Data Bus to all awaiting FUs and the reorder buffer; mark the reservation station available
- 4. Commit: update register with reorder result
  - When the instruction is at the head of the reorder buffer and its result is present, update the register with the result (or store to memory) and remove the instruction from the reorder buffer. A mispredicted branch flushes the reorder buffer (commit is sometimes called "graduation")
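The in-order commit and the flush on a mispredicted branch can be illustrated with a toy model. This is only a sketch under stated assumptions: the class `ReorderBuffer` and its method names (`issue`, `write_result`, `commit`) are hypothetical names chosen here, and reservation stations, operand capture, and the CDB itself are deliberately left out.

```python
from collections import deque


class ReorderBuffer:
    """Toy reorder buffer: results may arrive out of order, commit is in order."""

    def __init__(self, size):
        self.size = size
        self.entries = deque()  # oldest instruction sits at the left (head)
        self.next_tag = 0
        self.registers = {}     # architectural register file

    def issue(self, dest):
        """Step 1: allocate a slot; return its tag, or None if the buffer is full."""
        if len(self.entries) >= self.size:
            return None
        tag = self.next_tag
        self.next_tag += 1
        self.entries.append({"tag": tag, "dest": dest, "value": None,
                             "done": False, "mispredicted": False})
        return tag

    def write_result(self, tag, value, mispredicted=False):
        """Step 3: record a finished result for the entry with this tag."""
        for entry in self.entries:
            if entry["tag"] == tag:
                entry["value"] = value
                entry["done"] = True
                entry["mispredicted"] = mispredicted
                return
        raise KeyError(tag)

    def commit(self):
        """Step 4: retire finished entries from the head only."""
        committed = []
        while self.entries and self.entries[0]["done"]:
            head = self.entries.popleft()
            if head["mispredicted"]:
                # A mispredicted branch at the head flushes all younger entries.
                self.entries.clear()
                break
            if head["dest"] is not None:
                self.registers[head["dest"]] = head["value"]
            committed.append(head["dest"])
        return committed
```

In this sketch a result that finishes early simply waits in the buffer: `commit()` returns nothing until the oldest entry is done, which is what keeps register updates in program order.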

2

Renaming Registers

- Common variation of speculative design
- Reorder buffer keeps instruction information but not the result
- Extend register file with extra renaming registers to hold speculative results
- Rename register allocated at issue; result goes into rename register on execution complete; rename register copied into real register on commit
- Operands read either from register file (real or speculative) or via Common Data Bus
- Advantage: operands are always from a single source (extended register file)

3

21164

4

Dynamic Scheduling in PowerPC 604 and Pentium Pro

| Parameter | PPC | PPro |
|---|---|---|
| Max. instructions issued/clock | 4 | 3 |
| Max. instr. complete exec./clock | 6 | 5 |
| Max. instr. committed/clock | 6 | 3 |
| Window (instrs in reorder buffer) | 16 | 40 |
| Number of reservation stations | 12 | 20 |
| Number of rename registers | 8 int/12 FP | 40 |
| No. integer functional units (FUs) | 2 | 2 |
| No. floating point FUs | 1 | 1 |
| No. branch FUs | 1 | 1 |
| No. complex integer FUs | 1 | 0 |
| No. memory FUs | 1 | 1 load + 1 store |

Q: How to pipeline 1- to 17-byte x86 instructions?

6

Dynamic Scheduling in Pentium Pro

- PPro doesn't pipeline 80x86 instructions
- PPro decode unit translates the Intel instructions into 72-bit micro-operations (~ DLX)
- Sends micro-operations to reorder buffer & reservation stations
- Takes 1 clock cycle to determine length of 80x86 instructions + 2 more to create the micro-operations
- 12-14 clocks in total pipeline (~ 3 state machines)
- Many instructions translate to 1 to 4 micro-operations
- Complex 80x86 instructions are executed by a conventional microprogram (8K x 72 bits) that issues long sequences of micro-operations

7

Getting CPI < 1: Issuing Multiple Instructions/Cycle

- Two variations
- Superscalar: varying no. instructions/cycle (1 to 8), scheduled by compiler or by HW (Tomasulo)
  - IBM PowerPC, Sun UltraSparc, DEC Alpha, HP 8000
- (Very) Long Instruction Words (V)LIW: fixed number of instructions (4-16) scheduled by the compiler; put ops into wide templates
  - Joint HP/Intel agreement in 1999/2000?
  - Intel Architecture-64 (IA-64) 64-bit address
  - Style: "Explicitly Parallel Instruction Computer (EPIC)"
- Anticipated success led to use of Instructions Per Clock cycle (IPC) vs. CPI

8

Getting CPI < 1: Issuing Multiple Instructions/Cycle

- Superscalar DLX: 2 instructions, 1 FP & 1 anything else
  - Fetch 64 bits/clock cycle; Int on left, FP on right
  - Can only issue 2nd instruction if 1st instruction issues
  - More ports for FP registers to do FP load & FP op in a pair

| Type | Pipe stages | | | | | | |
|---|---|---|---|---|---|---|---|
| Int. instruction | IF | ID | EX | MEM | WB | | |
| FP instruction | IF | ID | EX | MEM | WB | | |
| Int. instruction | | IF | ID | EX | MEM | WB | |
| FP instruction | | IF | ID | EX | MEM | WB | |
| Int. instruction | | | IF | ID | EX | MEM | WB |
| FP instruction | | | IF | ID | EX | MEM | WB |

- 1 cycle load delay expands to 3 instructions in SS
  - instruction in right half can't use it, nor instructions in next slot

9

Review: Unrolled Loop that Minimizes Stalls for Scalar

- 1 Loop: LD F0,0(R1)
- 2 LD F6,-8(R1)
- 3 LD F10,-16(R1)
- 4 LD F14,-24(R1)
- 5 ADDD F4,F0,F2
- 6 ADDD F8,F6,F2
- 7 ADDD F12,F10,F2
- 8 ADDD F16,F14,F2
- 9 SD 0(R1),F4
- 10 SD -8(R1),F8
- 11 SD -16(R1),F12
- 12 SUBI R1,R1,32
- 13 BNEZ R1,LOOP
- 14 SD 8(R1),F16 ; 8-32 = -24

14 clock cycles, or 3.5 per iteration

LD to ADDD: 1 Cycle; ADDD to SD: 2 Cycles

10

Loop Unrolling in Superscalar

| Integer instruction | FP instruction | Clock cycle |
|---|---|---|
| Loop: LD F0,0(R1) | | 1 |
| LD F6,-8(R1) | | 2 |
| LD F10,-16(R1) | ADDD F4,F0,F2 | 3 |
| LD F14,-24(R1) | ADDD F8,F6,F2 | 4 |
| LD F18,-32(R1) | ADDD F12,F10,F2 | 5 |
| SD 0(R1),F4 | ADDD F16,F14,F2 | 6 |
| SD -8(R1),F8 | ADDD F20,F18,F2 | 7 |
| SD -16(R1),F12 | | 8 |
| SD -24(R1),F16 | | 9 |
| SUBI R1,R1,40 | | 10 |
| BNEZ R1,LOOP | | 11 |
| SD -32(R1),F20 | | 12 |

- Unrolled 5 times to avoid delays (+1 due to SS)
- 12 clocks, or 2.4 clocks per iteration (1.5X)

11

Multiple Issue Challenges

- While Integer/FP split is simple for the HW, get CPI of 0.5 only for programs with:
  - Exactly 50% FP operations
  - No hazards
- If more instructions issue at same time, greater difficulty of decode and issue
  - Even 2-scalar => examine 2 opcodes, 6 register specifiers, & decide if 1 or 2 instructions can issue
- VLIW: tradeoff instruction space for simple decoding
  - The long instruction word has room for many operations
  - By definition, all the operations the compiler puts in the long instruction word are independent => execute in parallel
  - E.g., 2 integer operations, 2 FP ops, 2 memory refs, 1 branch
    - 16 to 24 bits per field => 7 x 16 or 112 bits to 7 x 24 or 168 bits wide
  - Need compiling technique that schedules across several branches

12

Loop Unrolling in VLIW

| Memory reference 1 | Memory reference 2 | FP operation 1 | FP op. 2 | Int. op/branch | Clock |
|---|---|---|---|---|---|
| LD F0,0(R1) | LD F6,-8(R1) | | | | 1 |
| LD F10,-16(R1) | LD F14,-24(R1) | | | | 2 |
| LD F18,-32(R1) | LD F22,-40(R1) | ADDD F4,F0,F2 | ADDD F8,F6,F2 | | 3 |
| LD F26,-48(R1) | | ADDD F12,F10,F2 | ADDD F16,F14,F2 | | 4 |
| | | ADDD F20,F18,F2 | ADDD F24,F22,F2 | | 5 |
| SD 0(R1),F4 | SD -8(R1),F8 | ADDD F28,F26,F2 | | | 6 |
| SD -16(R1),F12 | SD -24(R1),F16 | | | | 7 |
| SD -32(R1),F20 | SD -40(R1),F24 | | | SUBI R1,R1,48 | 8 |
| SD -0(R1),F28 | | | | BNEZ R1,LOOP | 9 |

- Unrolled 7 times to avoid delays
- 7 results in 9 clocks, or 1.3 clocks per iteration (1.8X)
- Average: 2.5 ops per clock, 50% efficiency
- Note: Need more registers in VLIW (15 vs. 6 in SS)
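The per-iteration figures quoted on the three unrolling slides can be rechecked with a few lines of arithmetic. The sketch below only recomputes numbers already given (14 clocks for 4 iterations, 12 for 5, 9 for 7); the helper name `clocks_per_iteration` is made up here, and the op count for the VLIW schedule is read off the schedule (7 LD + 7 ADDD + 7 SD + SUBI + BNEZ), assuming 5 operation slots per long instruction.

```python
def clocks_per_iteration(total_clocks, iterations):
    """Clocks spent per original loop iteration after unrolling."""
    return total_clocks / iterations


scalar = clocks_per_iteration(14, 4)       # unrolled 4 times, scalar pipeline
superscalar = clocks_per_iteration(12, 5)  # unrolled 5 times, 2-issue superscalar
vliw = clocks_per_iteration(9, 7)          # unrolled 7 times, 5-slot VLIW

# Speedups as quoted on the slides: each design against the previous one.
superscalar_speedup = scalar / superscalar
vliw_speedup = superscalar / vliw

# VLIW slot utilisation: 23 useful operations in 9 instructions of 5 slots.
vliw_ops = 7 + 7 + 7 + 1 + 1
ops_per_clock = vliw_ops / 9
efficiency = vliw_ops / (9 * 5)

print(f"scalar      {scalar:.2f} clocks/iteration")
print(f"superscalar {superscalar:.2f} clocks/iteration ({superscalar_speedup:.2f}X)")
print(f"VLIW        {vliw:.2f} clocks/iteration ({vliw_speedup:.2f}X)")
print(f"VLIW        {ops_per_clock:.2f} ops/clock, {efficiency:.0%} of slots used")
```

The recomputed values are slightly less round than the slide figures (for example about 1.29 clocks per iteration and about 2.56 ops per clock), which suggests the slides round or truncate.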

13

Advantages of HW (Tomasulo) vs. SW (VLIW) Speculation

- HW determines address conflicts

- HW better branch prediction

- HW maintains precise exception model

- HW does not execute bookkeeping instructions

- Works across multiple implementations

- SW speculation is much easier for HW design

14

Superscalar v. VLIW

- Smaller code size
- Binary compatibility across generations of hardware
- Simplified hardware for decoding, issuing instructions
- No interlock hardware (compiler checks?)
- More registers, but simplified hardware for register ports (multiple independent register files?)

15

Intel/HP "Explicitly Parallel Instruction Computer (EPIC)"

- 3 instructions in 128-bit "groups"; field determines if instructions dependent or independent
  - Smaller code size than old VLIW, larger than x86/RISC
  - Groups can be linked to show independence > 3 instr
- 64 integer registers + 64 floating point registers
  - Not separate files per functional unit as in old VLIW
- Hardware checks dependencies (interlocks => binary compatibility over time)
- Predicated execution (select 1 out of 64 1-bit flags) => 40% fewer mispredictions?
- IA-64: name of instruction set architecture; EPIC is type
- Merced is name of first implementation

16

Dynamic Scheduling in Superscalar

- Dependencies stop instruction issue
- Code compiled for old version will run poorly on newest version
  - May want code to vary depending on how superscalar

17

Dynamic Scheduling in Superscalar

- How to issue two instructions and keep in-order instruction issue for Tomasulo?
  - Assume 1 integer + 1 floating point
  - 1 Tomasulo control for integer, 1 for floating point
- Issue 2X clock rate, so that issue remains in order
- Only FP loads might cause dependency between integer and FP issue
  - Replace load reservation station with a load queue; operands must be read in the order they are fetched
  - Load checks addresses in Store Queue to avoid RAW violation
  - Store checks addresses in Load Queue to avoid WAR, WAW
  - Called "decoupled architecture"

18

Performance of Dynamic SS

| Iteration no. | Instructions | Issues | Executes | Writes result |
|---|---|---|---|---|
| 1 | LD F0,0(R1) | 1 | 2 | 4 |
| 1 | ADDD F4,F0,F2 | 1 | 5 | 8 |
| 1 | SD 0(R1),F4 | 2 | 9 | |
| 1 | SUBI R1,R1,8 | 3 | 4 | 5 |
| 1 | BNEZ R1,LOOP | 4 | 5 | |
| 2 | LD F0,0(R1) | 5 | 6 | 8 |
| 2 | ADDD F4,F0,F2 | 5 | 9 | 12 |
| 2 | SD 0(R1),F4 | 6 | 13 | |
| 2 | SUBI R1,R1,8 | 7 | 8 | 9 |
| 2 | BNEZ R1,LOOP | 8 | 9 | |

(All entries are clock-cycle numbers.)

- 4 clocks per iteration; only 1 FP instr/iteration
- Branches, decrements issues still take 1 clock cycle
- How get more performance?

19

Limits to Multi-Issue Machines

- Inherent limitations of ILP
  - 1 branch in 5: How to keep a 5-way VLIW busy?
  - Latencies of units: many operations must be scheduled
  - Need about Pipeline Depth x No. Functional Units of independent operations to keep machines busy, e.g. 5 x 4 = 15-20 independent instructions?
- Difficulties in building HW
  - Easy: More instruction bandwidth
  - Easy: Duplicate FUs to get parallel execution
  - Hard: Increase ports to Register File (bandwidth)
    - VLIW example needs 7 read and 3 write for Int. Reg. & 5 read and 3 write for FP reg
  - Harder: Increase ports to memory (bandwidth)
  - Decoding Superscalar and impact on clock rate, pipeline depth?

20

Limits to Multi-Issue Machines

- Limitations specific to either Superscalar or VLIW implementation
  - Decode issue in Superscalar: how wide practical?
  - VLIW code size: unroll loops + wasted fields in VLIW
    - IA-64 compresses dependent instructions, but still larger
  - VLIW lock step => 1 hazard & all instructions stall
    - IA-64 not lock step? Dynamic pipeline?
  - VLIW & binary compatibility is practical weakness as vary number FU and latencies over time
    - IA-64 promises binary compatibility

21

Limits to ILP

- Conflicting studies of amount of parallelism available in late 1980s and early 1990s. Different assumptions about:
  - Benchmarks (vectorized Fortran FP vs. integer C programs)
  - Hardware sophistication
  - Compiler sophistication
- How much ILP is available using existing mechanisms with increasing HW budgets?
- Do we need to invent new HW/SW mechanisms to keep on processor performance curve?

22

Limits to ILP

- Initial HW Model here; MIPS compilers.
- Assumptions for ideal/perfect machine to start:
  - 1. Register renaming: infinite virtual registers and all WAW & WAR hazards are avoided
  - 2. Branch prediction: perfect; no mispredictions
  - 3. Jump prediction: all jumps perfectly predicted => machine with perfect speculation & an unbounded buffer of instructions available
  - 4. Memory-address alias analysis: addresses are known & a store can be moved before a load provided addresses not equal
- 1 cycle latency for all instructions; unlimited number of instructions issued per clock cycle

23

Upper Limit to ILP: Ideal Machine (Figure 4.38, page 319)

[Figure: IPC per benchmark. FP: 75 - 150; Integer: 18 - 60]

24

More Realistic HW: Branch Impact (Figure 4.40, Page 323)

- Change from infinite window to examine to 2000 and maximum issue of 64 instructions per clock cycle

[Figure: IPC by branch prediction scheme (Perfect, Pick Cor. or BHT, BHT (512), Profile, No prediction). FP: 15 - 45; Integer: 6 - 12]

25

More Realistic HW: Register Impact (Figure 4.44, Page 328)

- Change: 2000 instr window, 64 instr issue, 8K 2-level prediction

[Figure: IPC by number of renaming registers (Infinite, 256, 128, 64, 32, None). FP: 11 - 45; Integer: 5 - 15]

26

More Realistic HW: Alias Impact (Figure 4.46, Page 330)

- Change: 2000 instr window, 64 instr issue, 8K 2-level prediction, 256 renaming registers

[Figure: IPC by alias analysis (Perfect; Global/Stack perf, heap conflicts; Inspec. Assem.; None). FP: 4 - 45 (Fortran, no heap); Integer: 4 - 9]

27

Realistic HW for '9X: Window Impact (Figure 4.48, Page 332)

- Perfect disambiguation (HW), 1K selective prediction, 16-entry return, 64 registers, issue as many as window

[Figure: IPC by window size (Infinite, 256, 128, 64, 32, 16, 8, 4). FP: 8 - 45; Integer: 6 - 12]

28

Brainiac vs. Speed Demon (1993)

- 8-scalar IBM Power-2 @ 71.5 MHz (5 stage pipe) vs. 2-scalar Alpha @ 200 MHz (7 stage pipe)

29

3 1996 Era Machines

| | Alpha 21164 | PPro | HP PA-8000 |
|---|---|---|---|
| Year | 1995 | 1995 | 1996 |
| Clock | 400 MHz | 200 MHz | 180 MHz |
| Cache | 8K/8K/96K/2M | 8K/8K/0.5M | 0/0/2M |
| Issue rate | 2 int + 2 FP | 3 instr (x86) | 4 instr |
| Pipe stages | 7-9 | 12-14 | 7-9 |
| Out-of-Order | 6 loads | 40 instr (µop) | 56 instr |
| Rename regs | none | 40 | 56 |

30

SPECint95base Performance (July 1996)

31

SPECfp95base Performance (July 1996)

32

3 1997 Era Machines

| | Alpha 21164 | Pentium II | HP PA-8000 |
|---|---|---|---|
| Year | 1995 | 1996 | 1996 |
| Clock | 600 MHz ('97) | 300 MHz ('97) | 236 MHz ('97) |
| Cache | 8K/8K/96K/2M | 16K/16K/0.5M | 0/0/4M |
| Issue rate | 2 int + 2 FP | 3 instr (x86) | 4 instr |
| Pipe stages | 7-9 | 12-14 | 7-9 |
| Out-of-Order | 6 loads | 40 instr (µop) | 56 instr |
| Rename regs | none | 40 | 56 |

33

SPECint95base Performance (Oct. 1997)

34

SPECfp95base Performance (Oct. 1997)

35

Summary

- Branch Prediction
  - Branch History Table: 2 bits for loop accuracy
  - Recently executed branches correlated with next branch?
  - Branch Target Buffer: include branch address & prediction
  - Predicated Execution can reduce number of branches, number of mispredicted branches
- Speculation: Out-of-order execution, In-order commit (reorder buffer)
- SW Pipelining
  - Symbolic Loop Unrolling to get most from pipeline with little code expansion, little overhead
- Superscalar and VLIW: CPI < 1 (IPC > 1)
  - Dynamic issue vs. Static issue
  - More instructions issue at same time => larger hazard penalty

36

CHAPTER 5 MEMORY

37

Who Cares About the Memory Hierarchy?

- Processor Only Thus Far in Course:
  - CPU cost/performance, ISA, Pipelined Execution
- CPU-DRAM Gap
  - 1980: no cache in µproc; 1995: 2-level cache on chip (1989: first Intel µproc with a cache on chip)

[Figure: Performance (log scale, 1 to 1000) vs. year, 1980 to 2000. CPU ("Moore's Law"): µProc 60%/yr.; DRAM: 7%/yr.; Processor-Memory Performance Gap grows 50% / year]

38

Processor-Memory Performance Gap Tax

| Processor | % Area (~ cost) | % Transistors (~ power) |
|---|---|---|
| Alpha 21164 | 37% | 77% |
| StrongArm SA110 | 61% | 94% |
| Pentium Pro | 64% | 88% |

- Pentium Pro: 2 dies per package: Proc/I/D + L2
- Caches have no inherent value, only try to close performance gap

39

Generations of Microprocessors

- Time of a full cache miss in instructions executed:
  - 1st Alpha (7000): 340 ns/5.0 ns = 68 clks x 2 or 136
  - 2nd Alpha (8400): 266 ns/3.3 ns = 80 clks x 4 or 320
  - 3rd Alpha (t.b.d.): 180 ns/1.7 ns = 108 clks x 6 or 648
- 1/2X latency x 3X clock rate x 3X Instr/clock => ~5X

40

Levels of the Memory Hierarchy

| Level | Capacity | Access time | Cost | Staging managed by | Xfer unit to next level down |
|---|---|---|---|---|---|
| CPU Registers | 100s Bytes | <10s ns | | prog./compiler | Instr. operands, 1-8 bytes |
| Cache | K Bytes | 10-100 ns | 1-0.1 cents/bit | cache cntl | Blocks, 8-128 bytes |
| Main Memory | M Bytes | 200 ns - 500 ns | .0001-.00001 cents/bit | OS | Pages, 512-4K bytes |
| Disk | G Bytes | 10 ms (10,000,000 ns) | 10^-5 - 10^-6 cents/bit | user/operator | Files, Mbytes |
| Tape | infinite | sec-min | 10^-8 cents/bit | | |

Upper levels are faster; lower levels are larger.

41

The Principle of Locality

- The Principle of Locality:
  - Programs access a relatively small portion of the address space at any instant of time.
- Two Different Types of Locality:
  - Temporal Locality (Locality in Time): If an item is referenced, it will tend to be referenced again soon (e.g., loops, reuse)
  - Spatial Locality (Locality in Space): If an item is referenced, items whose addresses are close by tend to be referenced soon (e.g., straightline code, array access)
- Last 15 years, HW relied on locality for speed

42

Memory Hierarchy Terminology

- Hit: data appears in some block in the upper level (example: Block X)
  - Hit Rate: the fraction of memory accesses found in the upper level
  - Hit Time: Time to access the upper level, which consists of RAM access time + Time to determine hit/miss
- Miss: data needs to be retrieved from a block in the lower level (Block Y)
  - Miss Rate = 1 - (Hit Rate)
  - Miss Penalty: Time to replace a block in the upper level + Time to deliver the block to the processor
- Hit Time << Miss Penalty (500 instructions on 21264!)

[Figure: Upper Level Memory holds Blk X and exchanges data To/From Processor; Lower Level Memory holds Blk Y]

43

Cache Measures

- Hit rate: fraction found in that level
  - So high that usually talk about Miss rate
  - Miss rate fallacy: as MIPS to CPU performance, miss rate to average memory access time in memory
- Average memory-access time = Hit time + Miss rate x Miss penalty (ns or clocks)
- Miss penalty: time to replace a block from lower level, including time to replace in CPU
  - access time: time to lower level = f(latency to lower level)
  - transfer time: time to transfer block = f(BW between upper & lower levels)
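The average memory-access time formula on this slide is easy to evaluate directly. The sketch below is a minimal illustration; the function names and the sample numbers (1-clock hit, 5% miss rate, 40-clock access time, 32-byte block, 4 bytes per clock) are assumptions picked for the example, not values from the slides.

```python
def miss_penalty(access_time, block_bytes, bytes_per_clock):
    """Miss penalty = access time (latency) + transfer time (block size / bandwidth)."""
    return access_time + block_bytes / bytes_per_clock


def average_memory_access_time(hit_time, miss_rate, penalty):
    """AMAT = hit time + miss rate x miss penalty, in the units of the inputs."""
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be a fraction between 0 and 1")
    return hit_time + miss_rate * penalty


# Hypothetical cache: all times in clock cycles.
penalty = miss_penalty(access_time=40, block_bytes=32, bytes_per_clock=4)
amat = average_memory_access_time(hit_time=1, miss_rate=0.05, penalty=penalty)
print(f"miss penalty = {penalty} clocks, AMAT = {amat:.2f} clocks")
```

With these assumed inputs a 5% miss rate more than triples the effective access time, which is why the slide warns against judging a cache by miss rate alone.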

44

Simplest Cache: Direct Mapped

[Figure: Memory with addresses 0 to F mapped onto a 4 Byte Direct Mapped Cache with Cache Index 0 to 3]

- Location 0 can be occupied by data from:
  - Memory location 0, 4, 8, ... etc.
  - In general: any memory location whose 2 LSBs of the address are 0s
  - Address<1:0> => cache index
- Which one should we place in the cache?
- How can we tell which one is in the cache?

45

1 KB Direct Mapped Cache, 32B blocks

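- For a 2^N byte cache:
  - The uppermost (32 - N) bits are always the Cache Tag
  - The lowest M bits are the Byte Select (Block Size = 2^M)

[Figure: 32-bit address split into Cache Tag (bits 31-10, example: 0x50), Cache Index (bits 9-5, ex: 0x01), and Byte Select (bits 4-0, ex: 0x00). Each of the 32 entries (index 0 to 31) holds a Valid Bit and a Cache Tag, stored as part of the cache state, plus 32 bytes of Cache Data: Byte 0 to Byte 31 at index 0, Byte 32 to Byte 63 at index 1 (tag 0x50), ..., Byte 992 to Byte 1023 at index 31]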
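The tag / index / byte-select split for the 1 KB, 32-byte-block example can be written out as bit operations. This is a sketch assuming power-of-two cache and block sizes; `split_address` is a name invented for the example, and the sample address is simply the one assembled from the slide's field values (tag 0x50, index 0x01, byte select 0x00).

```python
def split_address(address, cache_bytes=1024, block_bytes=32):
    """Split an address into (tag, index, byte_select) for a direct mapped cache.

    Assumes cache_bytes and block_bytes are powers of two.
    """
    offset_bits = block_bytes.bit_length() - 1                 # M: 5 bits for 32 B
    index_bits = (cache_bytes // block_bytes).bit_length() - 1  # 5 bits for 32 blocks
    byte_select = address & (block_bytes - 1)
    index = (address >> offset_bits) & ((1 << index_bits) - 1)
    tag = address >> (offset_bits + index_bits)                # uppermost 32 - N bits
    return tag, index, byte_select


# Address built from the slide's example fields: tag 0x50, index 0x01, byte 0x00.
example = (0x50 << 10) | (0x01 << 5) | 0x00
tag, index, byte_select = split_address(example)
print(hex(example), hex(tag), hex(index), hex(byte_select))
```

A lookup would then compare `tag` against the tag stored at entry `index` and check the valid bit; that part is omitted here.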

46

Two-way Set Associative Cache

- N-way set associative: N entries for each Cache Index
  - N direct mapped caches operate in parallel (N typically 2 to 4)
- Example: Two-way set associative cache
  - Cache Index selects a "set" from the cache
  - The two tags in the set are compared in parallel
  - Data is selected based on the tag result

[Figure: two banks of Valid / Cache Tag / Cache Data (Cache Block 0, ...) selected by the Cache Index; each stored tag is compared with the Adr Tag; the compare outputs drive Sel0/Sel1 of a Mux that picks the Cache Block, and are ORed to produce Hit]

47

Disadvantage of Set Associative Cache

- N-way Set Associative Cache v. Direct Mapped Cache:
  - N comparators vs. 1
  - Extra MUX delay for the data
  - Data comes AFTER Hit/Miss
- In a direct mapped cache, Cache Block is available BEFORE Hit/Miss:
  - Possible to assume a hit and continue. Recover later if miss.

48

4 Questions for Memory Hierarchy

- Q1: Where can a block be placed in the upper level? (Block placement)
- Q2: How is a block found if it is in the upper level? (Block identification)
- Q3: Which block should be replaced on a miss? (Block replacement)
- Q4: What happens on a write? (Write strategy)

49

Q1: Where can a block be placed in the upper level?

- Block 12 placed in 8 block cache:
  - Fully associative, direct mapped, 2-way set associative
  - S.A. Mapping = Block Number Modulo Number Sets

[Figure: memory block 12 and its possible locations in the cache under each scheme]
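The "Block Number Modulo Number Sets" rule covers all three placements once direct mapped is treated as 1-way and fully associative as 8-way. The sketch below assumes frames are numbered so that the frames of one set are adjacent; `candidate_frames` is a hypothetical helper name.

```python
def candidate_frames(block_number, num_frames, ways):
    """Cache frames in which a memory block may be placed.

    ways=1 is direct mapped, ways=num_frames is fully associative.
    Frames of the same set are assumed to be numbered contiguously.
    """
    num_sets = num_frames // ways
    set_index = block_number % num_sets   # block number modulo number of sets
    first = set_index * ways
    return list(range(first, first + ways))


for name, ways in [("direct mapped", 1), ("2-way set associative", 2),
                   ("fully associative", 8)]:
    print(f"{name:>22}: block 12 -> frames {candidate_frames(12, 8, ways)}")
```

For block 12 in an 8-block cache this gives frame 4 when direct mapped (12 mod 8), set 0 when 2-way set associative (12 mod 4), and any frame when fully associative.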

50

Q2 How is a block found if it is in the upper

level?

- Tag on each block
  - No need to check index or block offset
- Increasing associativity shrinks index, expands tag

51

Q3 Which block should be replaced on a miss?

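- Easy for Direct Mapped
- Set Associative or Fully Associative:
  - Random
  - LRU (Least Recently Used)

Miss rates (%) by associativity and replacement policy:

| Size | 2-way LRU | 2-way Random | 4-way LRU | 4-way Random | 8-way LRU | 8-way Random |
|---|---|---|---|---|---|---|
| 16 KB | 5.2 | 5.7 | 4.7 | 5.3 | 4.4 | 5.0 |
| 64 KB | 1.9 | 2.0 | 1.5 | 1.7 | 1.4 | 1.5 |
| 256 KB | 1.15 | 1.17 | 1.13 | 1.13 | 1.12 | 1.12 |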

52

Q4 What happens on a write?

- Write through: The information is written to both the block in the cache and to the block in the lower-level memory.
- Write back: The information is written only to the block in the cache. The modified cache block is written to main memory only when it is replaced.
  - Is block clean or dirty?
- Pros and Cons of each?
  - WT: read misses cannot result in writes
  - WB: no repeated writes to same location
- WT always combined with write buffers so that don't wait for lower level memory

53

Write Buffer for Write Through

[Figure: Processor writes to Cache and to Write Buffer; Write Buffer drains to DRAM]

- A Write Buffer is needed between the Cache and Memory
  - Processor: writes data into the cache and the write buffer
  - Memory controller: writes contents of the buffer to memory
- Write buffer is just a FIFO:
  - Typical number of entries: 4
  - Works fine if: Store frequency (w.r.t. time) << 1 / DRAM write cycle
- Memory system designer's nightmare:
  - Store frequency (w.r.t. time) -> 1 / DRAM write cycle
  - Write buffer saturation

54

Impact of Memory Hierarchy on Algorithms

- Today CPU time is a function of (ops, cache misses) vs. just f(ops): What does this mean to Compilers, Data structures, Algorithms?
- "The Influence of Caches on the Performance of Sorting" by A. LaMarca and R.E. Ladner. Proceedings of the Eighth Annual ACM-SIAM Symposium on Discrete Algorithms, January, 1997, 370-379.
- Quicksort: fastest comparison based sorting algorithm when all keys fit in memory
- Radix sort: also called "linear time" sort because for keys of fixed length and fixed radix a constant number of passes over the data is sufficient independent of the number of keys
- For Alphastation 250, 32 byte blocks, direct mapped L2 2MB cache, 8 byte keys, from 4000 to 4000000

55

Quicksort vs. Radix as vary number keys: Instructions

[Figure: Instructions/key vs. set size in keys, for Radix sort and Quick sort]

56

Quicksort vs. Radix as vary number keys: Instrs & Time

[Figure: Instructions and Time vs. set size in keys, for Radix sort and Quick sort]

57

Quicksort vs. Radix as vary number keys: Cache misses

[Figure: Cache misses vs. set size in keys, for Radix sort and Quick sort]

What is proper approach to fast algorithms?

58

A Modern Memory Hierarchy

- By taking advantage of the principle of locality:
  - Present the user with as much memory as is available in the cheapest technology.
  - Provide access at the speed offered by the fastest technology.

[Figure: Processor (Control, Datapath, Registers) -> On-Chip Cache -> Second Level Cache (SRAM) -> Main Memory (DRAM) -> Secondary Storage (Disk) -> Tertiary Storage (Disk/Tape). Speed (ns): 1s, 10s, 100s, 10,000,000s (10s ms), 10,000,000,000s (10s sec). Size (bytes): 100s, Ks, Ms, Gs, Ts]

59

Basic Issues in VM System Design

- Size of information blocks that are transferred from secondary to main storage (M)
- Block of information brought into M, and M is full, then some region of M must be released to make room for the new block --> replacement policy
- Which region of M is to hold the new block --> placement policy
- Missing item fetched from secondary memory only on the occurrence of a fault --> demand load policy

[Figure: reg <-> cache <-> mem <-> disk; pages on disk are brought into frames in mem]

Paging Organization: virtual and physical address space partitioned into blocks of equal size (pages and page frames)

60

Address Map

V = {0, 1, . . . , n - 1} virtual address space

M = {0, 1, . . . , m - 1} physical address space (n > m)

MAP: V --> M U {0} address mapping function

MAP(a) = a' if data at virtual address a is present in physical address a' and a' in M

MAP(a) = 0 if data at virtual address a is not present in M

[Figure: the Processor issues address a from Name Space V to the Addr Trans Mechanism. If present, physical address a' goes to Main Memory; otherwise the result is 0, a missing item fault invokes the fault handler, and the OS performs the transfer from Secondary Memory to Main Memory]
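The MAP function can be sketched as a page-table lookup. Everything concrete here is an assumption for illustration: the 1K page size, the dictionary used as a page table, and the names `translate` and `PageFault`. The sketch also signals a missing page with an exception rather than returning the special value 0 used in the definition above.

```python
PAGE_SIZE = 1024  # assumption: 1K pages


class PageFault(Exception):
    """Raised when the virtual page is not present in physical memory."""


def translate(virtual_address, page_table, page_size=PAGE_SIZE):
    """MAP(a): return the physical address a' for virtual address a.

    page_table maps virtual page number -> physical frame number and only
    contains pages that are currently present in main memory.
    """
    page_no, disp = divmod(virtual_address, page_size)
    frame = page_table.get(page_no)
    if frame is None:
        # Missing item fault: the OS would load the page, then retry.
        raise PageFault(page_no)
    return frame * page_size + disp


# Hypothetical mapping: virtual page 0 -> frame 3, virtual page 2 -> frame 7.
page_table = {0: 3, 2: 7}
print(translate(5, page_table))               # page 0, disp 5
print(translate(2 * 1024 + 100, page_table))  # page 2, disp 100
```

Because pages and frames have the same size, the displacement passes through unchanged and only the page number is replaced.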

61

Paging Organization

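[Figure: Physical Memory (P.A.) holds frames 0 to 7 of 1K each, at addresses 0, 1024, ..., 7168. Virtual Memory (V.A.) holds pages 0 to 31 of 1K each, at addresses 0, 1024, ..., 31744. Addr Trans MAP maps pages to frames. The page is the unit of mapping and also the unit of transfer from virtual to physical memory]

Address Mapping

[Figure: the VA is split into page no. and a 10-bit disp. The page no. is the index into the Page Table, which is located in physical memory and found via the Page Table Base Reg. Each entry holds V, Access Rights, and PA. The PA is combined with disp to form the physical memory address (shown as an addition; actually, concatenation is more likely)]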

62

Virtual Address and a Cache

[Figure: CPU -> VA -> Translation -> PA -> Cache; on a hit the cache returns data, on a miss the access goes to Main Memory]

- It takes an extra memory access to translate VA to PA
- This makes cache access very expensive, and this is the "innermost loop" that you want to go as fast as possible
- ASIDE: Why access cache with PA at all? VA caches have a problem!
  - synonym / alias problem: two different virtual addresses map to same physical address => two different cache entries holding data for the same physical address!
  - for update: must update all cache entries with same physical address or memory becomes inconsistent
  - determining this requires significant hardware, essentially an associative lookup on the physical address tags to see if you have multiple hits
  - or software enforced alias boundary: same lsb of VA & PA > cache size

63

TLBs

A way to speed up translation is to use a special cache of recently used page table entries -- this has many names, but the most frequently used is Translation Lookaside Buffer or TLB

TLB entry fields: Virtual Address | Physical Address | Dirty | Ref | Valid | Access

Really just a cache on the page table mappings

TLB access time comparable to cache access time (much less than main memory access time)

64

Translation Look-Aside Buffers

Just like any other cache, the TLB can be organized as fully associative, set associative, or direct mapped.

TLBs are usually small, typically not more than 128 - 256 entries even on high end machines. This permits fully associative lookup on these machines. Most mid-range machines use small n-way set associative organizations.

[Figure: Translation with a TLB. CPU -> VA -> TLB Lookup; on a TLB hit the PA goes to the Cache, on a TLB miss to Translation; on a cache hit data returns to the CPU, on a cache miss the access goes to Main Memory. Relative times marked on the figure: 1/2 t, t, 20 t]

65

Reducing Translation Time

- Machines with TLBs go one step further to reduce cycles/cache access
- They overlap the cache access with the TLB access
  - High order bits of the VA are used to look in the TLB while low order bits are used as index into cache

66

Overlapped Cache TLB Access

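[Figure: the VA is split into a 20-bit page no. and a 12-bit disp. The page no. goes to the TLB (assoc lookup), which returns PA and Hit/Miss. In parallel, 10 bits of the disp index a cache of 1 K entries of 4 bytes (the low 2 bits, 00, select the byte), which returns Data, a PA tag, and Hit/Miss]

IF cache hit AND (cache tag = PA) THEN deliver data to CPU

ELSE IF [cache miss OR (cache tag != PA)] AND TLB hit THEN access memory with the PA from the TLB

ELSE do standard VA translation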

67

Problems With Overlapped TLB Access

Overlapped access only works as long as the address bits used to index into the cache do not change as the result of VA translation.

This usually limits things to small caches, large page sizes, or high n-way set associative caches if you want a large cache.

Example: suppose everything the same except that the cache is increased to 8 K bytes instead of 4 K:

[Figure: the cache index is now 11 bits above the 2-bit byte offset (00), so its top bit lies in the 20-bit virt page no. rather than in the 12-bit disp. This bit is changed by VA translation, but is needed for cache lookup]

Solutions:

- go to 8K byte page sizes
- go to 2 way set associative cache (two banks of 1K entries of 4 bytes, 10-bit index)
- or SW guarantee VA[13]=PA[13]
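The constraint on overlapped access reduces to a size comparison: the index plus byte-offset bits of one cache way must fit inside the page displacement. The check below is a sketch assuming power-of-two sizes; the function names are made up for the example.

```python
def translated_index_bits(cache_bytes, ways, page_bytes):
    """Number of cache index bits that fall in the translated part of the VA.

    Assumes all sizes are powers of two. Zero means overlapped TLB and cache
    access is safe without extra guarantees.
    """
    way_bits = (cache_bytes // ways).bit_length() - 1  # index + byte offset bits
    disp_bits = page_bytes.bit_length() - 1            # untranslated low bits
    return max(0, way_bits - disp_bits)


def can_overlap(cache_bytes, ways, page_bytes):
    """True when the cache can be indexed using only untranslated bits."""
    return translated_index_bits(cache_bytes, ways, page_bytes) == 0


KB = 1024
print(can_overlap(4 * KB, 1, 4 * KB))   # original 4 K direct mapped cache
print(can_overlap(8 * KB, 1, 4 * KB))   # cache grown to 8 K
print(can_overlap(8 * KB, 1, 8 * KB))   # fix 1: 8 K pages
print(can_overlap(8 * KB, 2, 4 * KB))   # fix 2: 2-way set associative
```

The third listed solution, a software guarantee that the offending virtual and physical address bits match, is not modelled here; it makes the one translated index bit harmless instead of removing it.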

68

Summary 1/4

- The Principle of Locality:
  - Programs access a relatively small portion of the address space at any instant of time.
    - Temporal Locality: Locality in Time
    - Spatial Locality: Locality in Space
- Three Major Categories of Cache Misses:
  - Compulsory Misses: sad facts of life. Example: cold start misses.
  - Capacity Misses: increase cache size
  - Conflict Misses: increase cache size and/or associativity. Nightmare Scenario: ping pong effect!
- Write Policy:
  - Write Through: needs a write buffer. Nightmare: WB saturation
  - Write Back: control can be complex

69

Summary 2 / 4 The Cache Design Space

- Several interacting dimensions

- cache size

- block size

- associativity

- replacement policy

- write-through vs write-back

- write allocation

- The optimal choice is a compromise

- depends on access characteristics

- workload

- use (I-cache, D-cache, TLB)

- depends on technology / cost

- Simplicity often wins

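[Figure: design space with axes Cache Size, Associativity, and Block Size; a tradeoff plot of Factor A and Factor B ranging from Good to Bad as a parameter goes from Less to More]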

70

Summary 3/4 TLB, Virtual Memory

- Caches, TLBs, Virtual Memory all understood by examining how they deal with 4 questions: 1) Where can block be placed? 2) How is block found? 3) What block is replaced on miss? 4) How are writes handled?
- Page tables map virtual address to physical address
- TLBs are important for fast translation
- TLB misses are significant in processor performance
  - funny times, as most systems can't access all of 2nd level cache without TLB misses!

71

Summary 4/4 Memory Hierarchy

- Virtual memory was controversial at the time: can SW automatically manage 64KB across many programs?
  - 1000X DRAM growth removed the controversy
- Today VM allows many processes to share single memory without having to swap all processes to disk; today VM protection is more important than memory hierarchy
- Today CPU time is a function of (ops, cache misses) vs. just f(ops): What does this mean to Compilers, Data structures, Algorithms?

72

Review: Four Questions for Memory Hierarchy Designers

- Q1: Where can a block be placed in the upper level? (Block placement)
  - Fully Associative, Set Associative, Direct Mapped
- Q2: How is a block found if it is in the upper level? (Block identification)
  - Tag/Block
- Q3: Which block should be replaced on a miss? (Block replacement)
  - Random, LRU
- Q4: What happens on a write? (Write strategy)
  - Write Back or Write Through (with Write Buffer)

73

Cache Performance

- CPU time = (CPU execution clock cycles + Memory stall clock cycles) x clock cycle time
- Memory stall clock cycles = (Reads x Read miss rate x Read miss penalty + Writes x Write miss rate x Write miss penalty)
- Memory stall clock cycles = Memory accesses x Miss rate x Miss penalty

74

Cache Performance

- CPUtime = Instruction Count x (CPI_execution + Mem accesses per instruction x Miss rate x Miss penalty) x Clock cycle time
- Misses per instruction = Memory accesses per instruction x Miss rate
- CPUtime = IC x (CPI_execution + Misses per instruction x Miss penalty) x Clock cycle time
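The CPU time equation with memory stalls can be evaluated as written. The sketch below is illustrative only: the function name `cpu_time` and all sample values (one million instructions, CPI of 1.0, 1.3 memory accesses per instruction, 2% miss rate, 50-clock miss penalty, 2 ns clock) are assumptions chosen for the example.

```python
def cpu_time(instruction_count, cpi_execution, mem_accesses_per_instr,
             miss_rate, miss_penalty, clock_cycle_time):
    """CPU time = IC x (CPI_execution + misses/instr x miss penalty) x cycle time."""
    misses_per_instr = mem_accesses_per_instr * miss_rate
    effective_cpi = cpi_execution + misses_per_instr * miss_penalty
    return instruction_count * effective_cpi * clock_cycle_time


# Hypothetical machine; the clock cycle time is in seconds.
with_misses = cpu_time(1_000_000, 1.0, 1.3, 0.02, 50, 2e-9)
perfect_cache = cpu_time(1_000_000, 1.0, 1.3, 0.0, 50, 2e-9)
print(f"with misses:   {with_misses * 1e3:.2f} ms")
print(f"perfect cache: {perfect_cache * 1e3:.2f} ms")
print(f"slowdown:      {with_misses / perfect_cache:.2f}X")
```

With these assumed numbers the memory stalls contribute 1.3 cycles per instruction, more than the execution CPI itself.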

75

Improving Cache Performance

- 1. Reduce the miss rate,

- 2. Reduce the miss penalty, or

- 3. Reduce the time to hit in the cache.

76

Reducing Misses

- Classifying Misses: 3 Cs
  - Compulsory: The first access to a block is not in the cache, so the block must be brought into the cache. Also called cold start misses or first reference misses. (Misses in even an Infinite Cache)
  - Capacity: If the cache cannot contain all the blocks needed during execution of a program, capacity misses will occur due to blocks being discarded and later retrieved. (Misses in Fully Associative Size X Cache)
  - Conflict: If block-placement strategy is set associative or direct mapped, conflict misses (in addition to compulsory & capacity misses) will occur because a block can be discarded and later retrieved if too many blocks map to its set. Also called collision misses or interference misses. (Misses in N-way Associative, Size X Cache)
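The parenthetical definitions suggest a mechanical way to classify each miss: simulate the real cache next to a fully associative cache of the same size. The sketch below assumes LRU replacement in both caches and a trace of block numbers; the function name `classify_misses` and the traces are illustrative, not taken from the slides.

```python
from collections import OrderedDict


def _access(lru, key, capacity):
    """Touch key in an LRU-ordered dict; return True on hit, False on miss."""
    if key in lru:
        lru.move_to_end(key)
        return True
    if len(lru) >= capacity:
        lru.popitem(last=False)  # evict the least recently used entry
    lru[key] = True
    return False


def classify_misses(trace, num_blocks, num_sets):
    """Count hits and the 3 Cs for a trace of block numbers (LRU assumed)."""
    ways = num_blocks // num_sets
    seen = set()                      # blocks referenced at least once
    fully_assoc = OrderedDict()       # reference cache: same size, one set
    sets = [OrderedDict() for _ in range(num_sets)]
    counts = {"hit": 0, "compulsory": 0, "capacity": 0, "conflict": 0}
    for block in trace:
        fa_hit = _access(fully_assoc, block, num_blocks)
        real_hit = _access(sets[block % num_sets], block, ways)
        if real_hit:
            counts["hit"] += 1
        elif block not in seen:
            counts["compulsory"] += 1  # would miss even in an infinite cache
        elif not fa_hit:
            counts["capacity"] += 1    # misses even when fully associative
        else:
            counts["conflict"] += 1    # only the restricted placement misses
        seen.add(block)
    return counts


# Blocks 0 and 8 collide in an 8-block direct mapped cache ("ping pong").
print(classify_misses([0, 8, 0, 8], num_blocks=8, num_sets=8))
# The same trace in a 2-way set associative cache of the same size.
print(classify_misses([0, 8, 0, 8], num_blocks=8, num_sets=4))
```

In the first trace the repeated misses are conflict misses, and they disappear once the two blocks can share a 2-way set.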

77

3Cs Absolute Miss Rate (SPEC92)

Conflict

Compulsory vanishingly small

78

2:1 Cache Rule

miss rate 1-way associative cache size X = miss rate 2-way associative cache size X/2

Conflict

79

3Cs Relative Miss Rate

Conflict

Flaws: for fixed block size. Good: insight => invention

80

How Can Reduce Misses?

- 3 Cs: Compulsory, Capacity, Conflict
- In all cases, assume total cache size not changed
- What happens if:
  - 1) Change Block Size: Which of 3Cs is obviously affected?
  - 2) Change Associativity: Which of 3Cs is obviously affected?
  - 3) Change Compiler: Which of 3Cs is obviously affected?

81

1. Reduce Misses via Larger Block Size

82

2. Reduce Misses via Higher Associativity

- 2:1 Cache Rule:
  - Miss Rate DM cache size N = Miss Rate 2-way cache size N/2
- Beware: Execution time is only final measure!
  - Will Clock Cycle time increase?
  - Hill [1988] suggested hit time for 2-way vs. 1-way: external cache +10%, internal +2%

83

Example: Avg. Memory Access Time vs. Miss Rate

- Example: assume CCT = 1.10 for 2-way, 1.12 for 4-way, 1.14 for 8-way vs. CCT direct mapped

| Cache Size (KB) | 1-way | 2-way | 4-way | 8-way |
|---|---|---|---|---|
| 1 | 2.33 | 2.15 | 2.07 | 2.01 |
| 2 | 1.98 | 1.86 | 1.76 | 1.68 |
| 4 | 1.72 | 1.67 | 1.61 | 1.53 |
| 8 | 1.46 | 1.48 | 1.47 | 1.43 |
| 16 | 1.29 | 1.32 | 1.32 | 1.32 |
| 32 | 1.20 | 1.24 | 1.25 | 1.27 |
| 64 | 1.14 | 1.20 | 1.21 | 1.23 |
| 128 | 1.10 | 1.17 | 1.18 | 1.20 |

84

3. Reducing Misses via a Victim Cache

- How to combine fast hit time of direct mapped yet still avoid conflict misses?

- Add buffer to place data discarded from cache

- Jouppi [1990]: 4-entry victim cache removed 20% to 95% of conflicts for a 4 KB direct mapped data cache

- Used in Alpha, HP machines

85

4. Reducing Misses via Pseudo-Associativity

- How to combine fast hit time of Direct Mapped and have the lower conflict misses of 2-way SA cache?

- Divide cache: on a miss, check other half of cache to see if there, if so have a pseudo-hit (slow hit)

- Drawback: CPU pipeline is hard if hit takes 1 or 2 cycles

- Better for caches not tied directly to processor (L2)

- Used in MIPS R10000 L2 cache, similar in UltraSPARC

(Figure: time axis showing Hit Time, Pseudo Hit Time, and Miss Penalty)
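A pseudo-associative lookup can be sketched as two probes of a direct-mapped array. The sketch below assumes the "other half" is found by inverting the most significant index bit, which is one common way to pair lines and is not stated on the slide. The function name `pseudo_lookup`, the use of full block addresses as tags, and -1 as the empty marker are hypothetical choices for illustration. A real design would typically also swap the two lines after a pseudo-hit, which is omitted here.

```c
#include <assert.h>

enum lookup_result { MISS = 0, FAST_HIT = 1, PSEUDO_HIT = 2 };

/* lines[] has 2^index_bits entries; each holds the block address cached
 * there, or -1 if the line is empty (assumed encoding). */
enum lookup_result pseudo_lookup(const long *lines, unsigned index_bits,
                                 unsigned long block_addr)
{
    assert(index_bits >= 1 && index_bits < 31);

    /* Normal direct-mapped index: low bits of the block address. */
    unsigned idx = (unsigned)(block_addr & ((1ul << index_bits) - 1ul));
    /* Partner line in the other half: flip the top index bit (assumption). */
    unsigned alt = idx ^ (1u << (index_bits - 1));

    if (lines[idx] == (long)block_addr)
        return FAST_HIT;    /* first probe: normal hit time */
    if (lines[alt] == (long)block_addr)
        return PSEUDO_HIT;  /* second probe: slow hit */
    return MISS;
}
```

With 8 lines, block 9 stored in line 1 is a fast hit, while block 17 stored in the partner line 5 is a pseudo-hit, because 17 also indexes line 1 on the first probe.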

86

5. Reducing Misses by Hardware Prefetching of Instructions & Data

- E.g., Instruction Prefetching

- Alpha 21064 fetches 2 blocks on a miss

- Extra block placed in stream buffer

- On miss check stream buffer

- Works with data blocks too:

- Jouppi [1990]: 1 data stream buffer got 25% of misses from 4KB cache; 4 streams got 43%

- Palacharla & Kessler [1994]: for scientific programs, 8 streams got 50% to 70% of misses from 2 64KB, 4-way set associative caches

- Prefetching relies on having extra memory bandwidth that can be used without penalty

87

6. Reducing Misses by Software Prefetching Data

- Data Prefetch

- Load data into register (HP PA-RISC loads)

- Cache Prefetch: load into cache (MIPS IV, PowerPC, SPARC v. 9)

- Special prefetching instructions cannot cause faults; a form of speculative execution

- Issuing Prefetch Instructions takes time

- Is cost of prefetch issues < savings in reduced misses?

- Higher superscalar reduces difficulty of issue bandwidth
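As an illustration of a non-faulting cache prefetch, GCC and Clang expose `__builtin_prefetch(addr, rw, locality)`. The sketch below sums an array while requesting data a fixed distance ahead. The function name `sum_with_prefetch` and the distance of 16 elements are assumptions chosen for the example, not values from the slides; whether the prefetch helps depends on the machine, and on other compilers the hint is simply compiled out.

```c
#include <assert.h>
#include <stddef.h>

#define PREFETCH_DISTANCE 16  /* elements ahead; a tuning guess (assumed) */

/* Sum a[0..n-1], hinting the cache about data needed a few iterations later. */
long sum_with_prefetch(const int *a, size_t n)
{
    assert(a != NULL || n == 0);
    long sum = 0;
    for (size_t i = 0; i < n; i++) {
#if defined(__GNUC__) || defined(__clang__)
        /* rw = 0: prefetch for read; locality = 1: low temporal reuse.
         * The hint does not change program results. */
        if (i + PREFETCH_DISTANCE < n)
            __builtin_prefetch(&a[i + PREFETCH_DISTANCE], 0, 1);
#endif
        sum += a[i];
    }
    return sum;
}
```

Each prefetch is an extra instruction in the loop, which is the issue cost the slide asks about: the hint pays off only if the misses it removes outweigh that overhead.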

88

7. Reducing Misses by Compiler Optimizations

- McFarling [1989] reduced cache misses by 75% on 8KB direct mapped cache, 4 byte blocks, in software

- Instructions

- Reorder procedures in memory so as to reduce conflict misses

- Profiling to look at conflicts (using tools they developed)

- Data

- Merging Arrays: improve spatial locality by single array of compound elements vs. 2 arrays

- Loop Interchange: change nesting of loops to access data in order stored in memory

- Loop Fusion: Combine 2 independent loops that have same looping and some variables overlap

- Blocking: Improve temporal locality by accessing blocks of data repeatedly vs. going down whole columns or rows

89

Merging Arrays Example

    /* Before: 2 sequential arrays */
    int val[SIZE];
    int key[SIZE];

    /* After: 1 array of structures */
    struct merge {
        int val;
        int key;
    };
    struct merge merged_array[SIZE];

- Reducing conflicts between val & key; improve spatial locality

90

Loop Interchange Example

    /* Before */
    for (k = 0; k < 100; k = k+1)
        for (j = 0; j < 100; j = j+1)
            for (i = 0; i < 5000; i = i+1)
                x[i][j] = 2 * x[i][j];

    /* After */
    for (k = 0; k < 100; k = k+1)
        for (i = 0; i < 5000; i = i+1)
            for (j = 0; j < 100; j = j+1)
                x[i][j] = 2 * x[i][j];

- Sequential accesses instead of striding through memory every 100 words; improved spatial locality

91

Loop Fusion Example

    /* Before */
    for (i = 0; i < N; i = i+1)
        for (j = 0; j < N; j = j+1)
            a[i][j] = 1/b[i][j] * c[i][j];
    for (i = 0; i < N; i = i+1)
        for (j = 0; j < N; j = j+1)
            d[i][j] = a[i][j] + c[i][j];

    /* After */
    for (i = 0; i < N; i = i+1)
        for (j = 0; j < N; j = j+1) {
            a[i][j] = 1/b[i][j] * c[i][j];
            d[i][j] = a[i][j] + c[i][j];
        }

- 2 misses per access to a & c vs. one miss per access; improve temporal locality (a and c are reused while still in the cache)

92

Blocking Example

    /* Before */
    for (i = 0; i < N; i = i+1)
        for (j = 0; j < N; j = j+1) {
            r = 0;
            for (k = 0; k < N; k = k+1)
                r = r + y[i][k]*z[k][j];
            x[i][j] = r;
        }

- Two Inner Loops:

- Read all NxN elements of z[]

- Read N elements of 1 row of y[] repeatedly

- Write N elements of 1 row of x[]

- Capacity Misses a function of N & Cache Size:

- If the cache can hold all three NxN matrices (3 x N x N x 4 bytes) => no capacity misses; otherwise ...

- Idea: compute on BxB submatrix that fits

93

Blocking Example

    /* After (x[][] is assumed to start out all zero) */
    for (jj = 0; jj < N; jj = jj+B)
    for (kk = 0; kk < N; kk = kk+B)
    for (i = 0; i < N; i = i+1)
        for (j = jj; j < min(jj+B,N); j = j+1) {
            r = 0;
            for (k = kk; k < min(kk+B,N); k = k+1)
                r = r + y[i][k]*z[k][j];
            x[i][j] = x[i][j] + r;
        }

- B called Blocking Factor

- Capacity Misses from 2N^3 + N^2 to 2N^3/B + N^2

- Conflict Misses Too?
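To check that blocking changes only the order of the work and not the result, the blocked loop nest can be compared against the plain triple loop. The following is a minimal sketch under a few assumptions: matrices are stored as flat row-major arrays of `double`, the function names `matmul_naive` and `matmul_blocked` are made up for the example, and the blocked version zeroes `x` itself before accumulating.

```c
#include <assert.h>
#include <stddef.h>

static size_t min_sz(size_t a, size_t b) { return a < b ? a : b; }

/* Reference version: x = y * z for n-by-n row-major matrices. */
void matmul_naive(size_t n, const double *y, const double *z, double *x)
{
    for (size_t i = 0; i < n; i++)
        for (size_t j = 0; j < n; j++) {
            double r = 0.0;
            for (size_t k = 0; k < n; k++)
                r += y[i * n + k] * z[k * n + j];
            x[i * n + j] = r;
        }
}

/* Blocked version with blocking factor bf: each (jj, kk) pair works on a
 * bf-by-bf tile of z, so the tile can stay in the cache while it is reused
 * for every row i. */
void matmul_blocked(size_t n, size_t bf, const double *y, const double *z,
                    double *x)
{
    assert(bf > 0);
    for (size_t i = 0; i < n * n; i++)
        x[i] = 0.0;  /* partial sums are accumulated into x */

    for (size_t jj = 0; jj < n; jj += bf)
        for (size_t kk = 0; kk < n; kk += bf)
            for (size_t i = 0; i < n; i++)
                for (size_t j = jj; j < min_sz(jj + bf, n); j++) {
                    double r = 0.0;
                    for (size_t k = kk; k < min_sz(kk + bf, n); k++)
                        r += y[i * n + k] * z[k * n + j];
                    x[i * n + j] += r;
                }
}
```

The tile bounds use `min_sz(jj + bf, n)` so that a blocking factor that does not divide `n` still covers every element. With floating-point inputs that are not small integers, the two versions may differ in the last bits because the additions happen in a different order.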

94

Reducing Conflict Misses by Blocking

- Conflict misses in caches not FA vs. Blocking size

- Lam et al [1991]: a blocking factor of 24 had a fifth the misses vs. 48, despite both fitting in cache

95

Summary of Compiler Optimizations to Reduce Cache Misses (by hand)

96

Summary

- 3 Cs: Compulsory, Capacity, Conflict Misses

- Reducing Miss Rate

- 1. Reduce Misses via Larger Block Size

- 2. Reduce Misses via Higher Associativity

- 3. Reducing Misses via Victim Cache

- 4. Reducing Misses via Pseudo-Associativity

- 5. Reducing Misses by HW Prefetching Instr, Data

- 6. Reducing Misses by SW Prefetching Data

- 7. Reducing Misses by Compiler Optimizations

- Remember danger of concentrating on just one parameter when evaluating performance

97

Review: Improving Cache Performance

- 1. Reduce the miss rate,

- 2. Reduce the miss penalty, or

- 3. Reduce the time to hit in the cache.

98

1. Reducing Miss Penalty: Read Priority over Write on Miss

- Write through with write buffers offer RAW conflicts with main memory reads on cache misses

- If simply wait for write buffer to empty, might increase read miss penalty (old MIPS 1000 by 50%)

- Check write buffer contents before read; if no conflicts, let the memory access continue

- Write Back?

- Read miss replacing dirty block

- Normal: Write dirty block to memory, and then do the read

- Instead: copy the dirty block to a write buffer, then do the read, and then do the write

- CPU stalls less since it restarts as soon as the read is done
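The write-buffer check on a read miss can be sketched as an address match against the pending writes. This is an illustrative sketch only: the struct layout, the name `write_buffer_lookup`, and the choice to forward the newest matching data (rather than stall until the conflicting write drains) are assumptions, not details from the slides.

```c
#include <assert.h>
#include <stddef.h>

/* One pending write waiting to go to memory (hypothetical layout). */
struct wb_entry {
    unsigned long addr;  /* address of the pending write */
    unsigned data;       /* value waiting to be written */
    int valid;           /* nonzero while the entry is still pending */
};

/* Check a read-miss address against the write buffer before going to memory.
 * Entries are assumed to be ordered oldest first, so the scan runs from the
 * newest entry backwards and returns the most recent matching write.
 * Returns 1 and fills *data on a RAW conflict, 0 if the read can proceed. */
int write_buffer_lookup(const struct wb_entry *wb, size_t n,
                        unsigned long addr, unsigned *data)
{
    assert(data != NULL);
    for (size_t i = n; i > 0; i--) {
        const struct wb_entry *e = &wb[i - 1];
        if (e->valid && e->addr == addr) {
            *data = e->data;
            return 1;
        }
    }
    return 0;
}
```

When the function returns 0, the read has no conflict with any buffered write and can go to memory ahead of them, which is the read priority the slide describes.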

99

2. Reduce Miss Penalty: Subblock Placement

- Don't have to load full block on a miss

- Have valid bits per subblock to indicate valid

- (Originally invented to reduce tag storage)

(Figure labels: Subblocks, Valid Bits)

100

3. Reduce Miss Penalty: Early Restart and Critical Word First

- Don't wait for full block to be loaded before restarting CPU

- Early restart: As soon as the requested word of the block arrives, send it to the CPU and let the CPU continue execution

- Critical Word First: Request the missed word first from memory and send it to the CPU as soon as it arrives; let the CPU continue execution while filling the rest of the words in the block. Also called wrapped fetch and requested word first

- Generally useful only in large blocks

- Spatial locality a problem: tend to want next sequential word, so not clear if benefit by early restart
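The "wrapped fetch" name suggests the order in which the words of the block arrive: start at the missed word and wrap around to the beginning of the block. The sketch below computes that order. The function name `wrapped_fetch_order` is hypothetical, and sequential wrap-around is assumed as the fill order; actual memory systems may use other burst orders.

```c
#include <assert.h>

/* Fill order[] with the word indices of a block in critical-word-first
 * order: the missed word first, then the following words, wrapping around
 * to the start of the block. order[] must have room for words_per_block
 * entries. */
void wrapped_fetch_order(unsigned critical, unsigned words_per_block,
                         unsigned *order)
{
    assert(words_per_block > 0 && critical < words_per_block);
    for (unsigned i = 0; i < words_per_block; i++)
        order[i] = (critical + i) % words_per_block;
}
```

For an 8-word block with a miss on word 5, the order is 5, 6, 7, 0, 1, 2, 3, 4, so the CPU can restart after the first transfer instead of the sixth.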


101

4. Reduce Miss Penalty: Non-blocking Caches to reduce stalls on misses

- Non-blocking cache or lockup-free cache allow data cache to continue to supply cache hits during a miss

- requires out-of-order execution CPU

- "hit under miss" reduces the effective miss penalty by working during miss vs. ignoring CPU requests

- "hit under multiple miss" or "miss under miss" may further lower the effective miss penalty by overlapping multiple misses

- Significantly increases the complexity of the cache controller as there can be multiple outstanding memory accesses

- Requires multiple memory banks (otherwise cannot support)

- Pentium Pro allows 4 outstanding memory misses

102

Value of Hit Under Miss for SPEC

(Figure: "Hit under n Misses" chart for Integer and Floating Point programs; legend: 0->1, 1->2, 2->64, Base)

- FP programs on average: AMAT = 0.68 -> 0.52 -> 0.34 -> 0.26

- Int programs on average: AMAT = 0.24 -> 0.20 -> 0.19 -> 0.19

- 8 KB Data Cache, Direct Mapped, 32B block, 16 cycle miss

103

5th Miss Penalty Reduction: Second Level Cache

- L2 Equations

- AMAT = Hit Time_L1 + Miss Rate_L1 x Miss Penalty_L1

- Miss Penalty_L1 = Hit Time_L2 + Miss Rate_L2 x Miss Penalty_L2

- AMAT = Hit Time_L1 + Miss Rate_L1 x (Hit Time_L2 + Miss Rate_L2 x Miss Penalty_L2)

- Definitions

- Local miss rate: misses in this cache divided by the total number of memory accesses to this cache (Miss Rate_L2)

- Global miss rate: misses in this cache divided by the total number of memory accesses generated by the CPU (Miss Rate_L1 x Miss Rate_L2)

- Global Miss Rate is what matters
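The L2 equations translate directly into a few lines of arithmetic. The sketch below is only a worked example: the function names are made up, and the numbers in the usage note are illustrative values, not measurements from the slides.

```c
#include <assert.h>

/* AMAT for a two-level hierarchy, following the equations above.
 * Times are in clock cycles; local_miss_rate_l2 is the L2 local miss rate
 * (L2 misses divided by accesses that reach L2). */
double amat_two_level(double hit_time_l1, double miss_rate_l1,
                      double hit_time_l2, double local_miss_rate_l2,
                      double miss_penalty_l2)
{
    assert(miss_rate_l1 >= 0.0 && miss_rate_l1 <= 1.0);
    assert(local_miss_rate_l2 >= 0.0 && local_miss_rate_l2 <= 1.0);

    /* Miss Penalty_L1 = Hit Time_L2 + Miss Rate_L2 x Miss Penalty_L2 */
    double miss_penalty_l1 = hit_time_l2 + local_miss_rate_l2 * miss_penalty_l2;

    /* AMAT = Hit Time_L1 + Miss Rate_L1 x Miss Penalty_L1 */
    return hit_time_l1 + miss_rate_l1 * miss_penalty_l1;
}

/* Global L2 miss rate = Miss Rate_L1 x local Miss Rate_L2. */
double global_miss_rate_l2(double miss_rate_l1, double local_miss_rate_l2)
{
    return miss_rate_l1 * local_miss_rate_l2;
}
```

With made-up values of a 1-cycle L1 hit, 5% L1 miss rate, 10-cycle L2 hit, 20% local L2 miss rate and 100-cycle L2 miss penalty, the L1 miss penalty is 10 + 0.2 x 100 = 30 cycles and AMAT is 1 + 0.05 x 30 = 2.5 cycles. The global L2 miss rate is 0.05 x 0.2 = 1%, far lower than the 20% local rate, which is why the local rate alone is misleading.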

104

Comparing Local and Global Miss Rates

- 32 KByte 1st level cache; Increasing 2nd level cache

- Global miss rate close to single level cache rate provided L2 >> L1

- Don't use local miss rate

- L2 not tied to CPU clock cycle!

- Cost & A.M.A.T.

- Generally Fast Hit Times and fewer misses

- Since hits are few, target miss reduction

(Figures: one plot with a linear Cache Size axis, one with a log Cache Size axis)

105

Reducing Misses: Which apply to L2 Cache?

- Reducing Miss Rate

- 1. Reduce Misses via Larger Block Size

- 2. Reduce Conflict Misses via Higher Associativity

- 3. Reducing Conflict Misses via Victim Cache

- 4. Reducing Conflict Misses via Pseudo-Associativity

- 5. Reducing Misses by HW Prefetching Instr, Data

- 6. Reducing Misses by SW Prefetching Data

- 7. Reducing Capacity/Conf. Misses by Compiler Optimizations

106

L2 cache block size & A.M.A.T.

- 32KB L1, 8 byte path to memory

107

Reducing Miss Penalty Summary

- Five techniques

- Read priority over write on miss

- Subblock placement

- Early Restart and Critical Word First on miss

- Non-blocking Caches (Hit under Miss, Miss under Miss)

- Second Level Cache

- Can be applied recursively to Multilevel Caches

- Danger is that time to DRAM will grow with multiple levels in between

- First attempts at L2 caches can make things worse, since increased worst case is worse

108

What is the Impact of What You've Learned About Caches?

- 1960-1985: Speed = ƒ(no. operations)

- 1990

- Pipelined Execution & Fast Clock Rate

- Out-of-Order execution

- Superscalar Instruction Issue

- 1998: Speed = ƒ(non-cached memory accesses)

- Superscalar, Out-of-Order machines hide L1 data cache miss (5 clocks) but not L2 cache miss (50 clocks)?