Chapter 3: Memory Management, Recent Systems

1

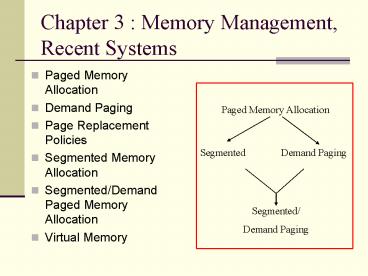

Chapter 3: Memory Management, Recent Systems

- Paged Memory Allocation

- Demand Paging

- Page Replacement Policies

- Segmented Memory Allocation

- Segmented/Demand Paged Memory Allocation

- Virtual Memory

2

Memory Management

- Early schemes were limited to storing the entire program in memory. Problems:

  - Fragmentation.

  - Overhead due to relocation.

- More sophisticated memory schemes now:

  - Eliminate the need to store programs contiguously.

  - Eliminate the need for the entire program to reside in memory during execution.

3

More Recent Memory Management Schemes

- Paged Memory Allocation

- Demand Paging Memory Allocation

- Segmented Memory Allocation

- Segmented/Demand Paged Allocation

4

Paged Memory Allocation

- Divides each incoming job into pages of equal size.

- Works well if page size = size of memory block (page frame) = size of disk section (sector, block).

5

Paged Memory Allocation (cont'd)

- Before executing a program, the memory manager:

  - Determines the number of pages in the program.

  - Locates enough empty page frames in main memory.

  - Loads all of the program's pages into them.

6

Programs Are Split Into Equal-sized Pages (Figure 3.1)

7

Free Frames

8

Job 1 (Figure 3.1)

- At compilation time every job is divided into pages:

  - Page 0 contains the first hundred lines.

  - Page 1 contains the second hundred lines.

  - Page 2 contains the third hundred lines.

  - Page 3 contains the last fifty lines.

- Program has 350 lines.

- Referred to by the system as line 0 through line 349.

9

Paging Requires 3 Tables to Track a Job's Pages

- Job Table (JT): 2 entries for each active job.

  - Size of the job and the memory location of its page map table.

  - Dynamic: grows/shrinks as jobs are loaded/completed.

- Page Map Table (PMT): 1 entry per page.

  - Page number and its corresponding page frame memory address.

  - Page numbers are sequential (Page 0, Page 1, ...).

- Memory Map Table (MMT): 1 entry for each page frame.

  - Location and free/busy status.
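
The three tables can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `load_job`, the dictionary layout, and the initial free/busy pattern of the frames are hypothetical and do not come from the slides. A Python reference to the PMT stands in for "memory location of its page map table".

```python
import math

PAGE_SIZE = 100  # lines per page, as in the Job 1 example


def load_job(job_id, job_size, job_table, memory_map):
    """Load every page of a job into free frames and return its PMT."""
    num_pages = math.ceil(job_size / PAGE_SIZE)
    # Memory Map Table: one free/busy flag per page frame (True = busy).
    free_frames = [f for f, busy in enumerate(memory_map) if not busy]
    if len(free_frames) < num_pages:
        raise MemoryError("not enough free page frames")
    pmt = {}  # Page Map Table: page number -> page frame number
    for page, frame in zip(range(num_pages), free_frames):
        pmt[page] = frame
        memory_map[frame] = True
    # Job Table: job size plus a reference to the job's PMT.
    job_table[job_id] = {"size": job_size, "pmt": pmt}
    return pmt


# Hypothetical starting state: frames 0, 3 and 7 are already busy.
memory_map = [True, False, False, True, False, False, False, True]
job_table = {}
pmt = load_job("job1", 350, job_table, memory_map)
print(pmt)  # {0: 1, 1: 2, 2: 4, 3: 5}
```

Note that the pages land in non-contiguous frames (2 and 4), which is the point of paged allocation.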

10

Job Table Contains 2 Entries for Each Active Job (Table 3.1)

11

Job 1 Is 350 Lines Long, Divided Into 4 Pages (Figure 3.2)

12

Page Map Table for Job 1 in Figure 3.1

13

Displacement (Figure 3.2)

- Displacement (offset) of a line: how far away a line is from the beginning of its page.

  - Used to locate that line within its page frame.

  - Relative factor.

- For example, lines 0, 100, 200, and 300 are the first lines of pages 0, 1, 2, and 3 respectively, so each has a displacement of zero.

14

To Find the Address of a Given Program Line

- Divide the line number to be located by the page size, keeping the remainder as an integer.

- The quotient is the page number.

- The remainder is the displacement.

15

Example

- 100 lines per page

- To access line 214

- 214 / 100 = 2, remainder 14

- Page 2, displacement 14
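
The division above maps directly onto Python's built-in `divmod`. The helper name `locate_line` is made up for this sketch.

```python
PAGE_SIZE = 100  # lines per page, from the example


def locate_line(line_number, page_size=PAGE_SIZE):
    """Return (page number, displacement) for a line of the job."""
    page_number, displacement = divmod(line_number, page_size)
    return page_number, displacement


print(locate_line(214))  # (2, 14)
```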

16

Questions and Answers

- Could the OS or the hardware get a page number greater than 3?

  - No, not if the application program was written correctly.

- If it did, what should the OS do?

  - Send an error message and stop processing the program. (The page is out of bounds.)

- Could the OS get a remainder of more than 99?

  - Not if it divides correctly.

- What is the smallest remainder possible?

  - Zero.

17

Address Resolution

- Each time an instruction is executed or a data value is used, the OS (or the hardware) must translate the job space address (the relative, or logical, address) into a physical (absolute) address.

18

Address Translation Architecture

19

Address Resolution Steps

- STEP 1

  - Page number = the integer quotient from the division of the job space address by the page size

  - Displacement = the remainder from the page number division

- STEP 2

  - Refer to the job's PMT and find the corresponding page frame number

- STEP 3

  - ADDR_PAGE_FRAME = PAGE_FRAME_NUM * PAGE_SIZE

- STEP 4

  - INSTR_ADDR_IN_MEM = ADDR_PAGE_FRAME + DISPL

20

Example Job 1 with its Page Map Table (Fig. 3.3)

21

Example Job 1 with its Page Map Table (Fig. 3.3)

- Address resolution steps

- 518 / 512 = 1, remainder 6, so Page 1, displacement 6

- From the PMT: page frame number 3

- 3 * 512 = 1536

- 1536 + 6 = 1542, the physical memory address
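
The four address resolution steps can be traced in a short Python sketch. Only the mapping of page 1 to frame 3 comes from the example; the function name `resolve` and the entry for page 0 are assumptions added so the table has more than one row.

```python
PAGE_SIZE = 512  # bytes per page, from the example

# Page Map Table for Job 1. Only page 1 -> frame 3 is given in the
# example; the entry for page 0 is a made-up placeholder.
PMT_JOB1 = {0: 5, 1: 3}


def resolve(job_space_address, pmt, page_size=PAGE_SIZE):
    """Translate a job space (logical) address into a physical address."""
    # Step 1: page number and displacement.
    page_number, displacement = divmod(job_space_address, page_size)
    # Step 2: look up the page frame number in the job's PMT.
    if page_number not in pmt:
        raise IndexError(f"page {page_number} is out of bounds")
    frame_number = pmt[page_number]
    # Step 3: address of the start of the page frame.
    addr_page_frame = frame_number * page_size
    # Step 4: add the displacement.
    return addr_page_frame + displacement


print(resolve(518, PMT_JOB1))  # 1542
```

The bounds check mirrors the earlier question about what to do when a page number is out of range.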

22

Pros and Cons of Paging

- Allows jobs to be allocated in non-contiguous memory locations.

  - Memory is used more efficiently; more jobs can fit.

- Size of page is crucial (not too small, not too large).

- Increased overhead occurs (address resolution).

- Reduces, but does not eliminate, internal fragmentation.

23

Demand Paging

- Bring a page into memory only when it is needed

- Less I/O and less memory needed.

- Faster response.

24

Demand Paging (cont'd)

- Takes advantage of the fact that programs are written sequentially, so not all pages are necessary at once. For example:

  - User-written error handling modules.

  - Mutually exclusive modules.

  - Certain program options are either mutually exclusive or not always accessible.

  - Many tables are assigned a fixed amount of address space even though only a fraction of the table is actually used.

25

Demand Paging (cont'd)

- Demand paging made virtual memory widely available.

  - Can give the appearance of an almost-infinite or nonfinite amount of physical memory.

- Requires use of a high-speed direct access storage device that can work directly with the CPU.

26

Demand Paging (cont'd)

- How and when the pages are passed (or swapped) depends on predefined policies that determine when to make room for needed pages and how to do so.

27

Tables in Demand Paging

- Job Table.

- Page Map Table (with 3 new fields).

  - Determines if requested page is already in memory (status bit).

  - Determines if page contents have been modified (modified bit).

  - Determines if the page has been referenced recently (reference bit).

    - Used to determine which pages should remain in main memory and which should be swapped out.

- Memory Map Table.

28

Page Map Table

29

Hardware Instruction Processing Algorithm

- 1. Start processing instruction

- 2. Generate data address

- 3. Compute page number

- 4. If page is in memory

  - Then

    - get data and finish instruction

    - advance to next instruction

    - return to step 1

  - Else

    - generate page interrupt

    - call page fault handler

30

Page Fault

- Page fault: a failure to find a page in memory.

- Page fault handler: the routine that handles a page fault.

31

Page Fault Handler Algorithm

- 1. If there is no free page frame

  - Then

    - Select page to be swapped out using page removal algorithm

    - Update job's page map table

    - If content of page had been changed then

      - Write page to disk

    - End if

  - End if

- 2. Use page number from step 3 of the Hardware Instruction Processing Algorithm to get the disk address where the requested page is stored.

- 3. Read page into memory.

- 4. Update job's page map table.

- 5. Update memory map table.

- 6. Restart interrupted instruction.
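
The handler steps can be sketched as a single Python function. Everything here is a simplified model under stated assumptions: the table layouts, the names `handle_page_fault` and `lowest_frame_victim`, and the `disk_writes` list that stands in for real disk I/O are all hypothetical. The victim selector is a placeholder for whatever page removal algorithm the system uses.

```python
def lowest_frame_victim(pmt):
    """Placeholder page removal algorithm: evict the page in the lowest frame."""
    resident = [p for p, entry in pmt.items() if entry["frame"] is not None]
    return min(resident, key=lambda p: pmt[p]["frame"])


def handle_page_fault(page, pmt, memory_map, choose_victim, disk_writes):
    """pmt: page -> {"frame", "modified"}; memory_map: frame -> page or None."""
    # Step 1: if there is no free page frame, swap a page out.
    if None not in memory_map:
        victim = choose_victim(pmt)
        victim_frame = pmt[victim]["frame"]
        if pmt[victim]["modified"]:
            disk_writes.append(victim)  # stands in for writing the page to disk
        pmt[victim] = {"frame": None, "modified": False}
        memory_map[victim_frame] = None
    frame = memory_map.index(None)
    # Steps 2-3: locating the page on disk and reading it in are not modeled.
    pmt[page] = {"frame": frame, "modified": False}  # step 4
    memory_map[frame] = page                         # step 5
    return frame  # step 6: the caller restarts the interrupted instruction


# Hypothetical state: two frames, both busy, and page A has been modified.
pmt = {
    "A": {"frame": 0, "modified": True},
    "B": {"frame": 1, "modified": False},
    "C": {"frame": None, "modified": False},
}
memory_map = ["A", "B"]
disk_writes = []
frame = handle_page_fault("C", pmt, memory_map, lowest_frame_victim, disk_writes)
print(frame, memory_map, disk_writes)  # 0 ['C', 'B'] ['A']
```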

32

Thrashing Is a Problem With Demand Paging

- Thrashing: an excessive amount of page swapping back and forth between main memory and secondary storage.

  - Operation becomes inefficient.

  - Caused when a page is removed from memory but is called back shortly thereafter.

- Can occur across jobs, when a large number of jobs are vying for a relatively small number of free pages.

- Can happen within a job (e.g., in loops that cross page boundaries).

33

Thrashing

34

Page Replacement Policies

- The policy that selects the page to be removed is crucial to system efficiency.

  - Selection of algorithm is critical.

- Most well-known policies:

  - First-in first-out (FIFO) policy: the best page to remove is the one that has been in memory the longest.

  - Least-recently-used (LRU) policy: chooses the pages least recently accessed to be swapped out.

- Most recently used (MRU) policy.

- Least frequently used (LFU) policy.

35

FIFO policy. When the program calls for Page C, Page A is moved out of the 1st page frame to make room for it (solid lines). When Page A is needed again, it replaces Page B in the 2nd page frame (dotted lines).

36

How each page requested is swapped into 2 available page frames using FIFO. When the program is ready to be processed, all 4 pages are on secondary storage. Throughout the program, 11 page requests are issued. When the program calls a page that isn't already in memory, a page interrupt is issued (marked in the figure). 9 page interrupts result.
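
A small simulation makes the count easy to check. The request sequence below is an assumption: the caption does not list the requests, so the sequence A B A C A B D B A C D was chosen because it fits the stated setup (4 pages, 11 requests, 2 frames) and the swaps described for the FIFO figure. The function name is also made up for this sketch.

```python
from collections import deque


def fifo_page_interrupts(requests, num_frames):
    """Count page interrupts under first-in first-out replacement."""
    frames = deque()  # oldest page sits at the left end
    interrupts = 0
    for page in requests:
        if page in frames:
            continue  # page already in memory, no interrupt
        interrupts += 1
        if len(frames) == num_frames:
            frames.popleft()  # remove the page that has been in memory longest
        frames.append(page)
    return interrupts


requests = list("ABACABDBACD")  # assumed request sequence, 11 requests
print(fifo_page_interrupts(requests, 2))  # 9
```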

37

FIFO

- High failure rate shown in previous example caused by:

  - limited amount of memory available.

  - order in which pages are requested by the program (can't change).

- There is no guarantee that buying more memory will always result in better performance (FIFO anomaly or Belady's anomaly). See exercise 6.
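
The anomaly can be reproduced with a short simulation. The reference string used here (1 2 3 4 1 2 5 1 2 3 4 5) is the one commonly used to illustrate Belady's anomaly; it is not taken from these slides, and the function name is hypothetical.

```python
from collections import deque


def fifo_faults(requests, num_frames):
    """Count page interrupts under FIFO replacement."""
    frames = deque()
    faults = 0
    for page in requests:
        if page not in frames:
            faults += 1
            if len(frames) == num_frames:
                frames.popleft()  # evict the oldest resident page
            frames.append(page)
    return faults


# Reference string commonly used to demonstrate the anomaly (an assumption here).
requests = [1, 2, 3, 4, 1, 2, 5, 1, 2, 3, 4, 5]
print(fifo_faults(requests, 3))  # 9
print(fifo_faults(requests, 4))  # 10
```

With this string, adding a fourth frame raises the number of page interrupts from 9 to 10.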

38

Belady's Anomaly

39

LRU policy for the program in Figure 3.8. Throughout the program 11 page requests are issued, but they cause only 8 page interrupts.

40

LRU

- The efficiency of LRU is only slightly better than that of FIFO.

- LRU is a stack algorithm removal policy: increasing main memory causes either a decrease in, or the same number of, page interrupts.

- LRU doesn't have the same anomaly that FIFO does.
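
A sketch of LRU, using an `OrderedDict` to keep pages in order of last use. As with the FIFO figure, the request sequence is an assumption chosen to match the stated counts (11 requests, 2 frames); it is not listed in the slides, and the function name is made up.

```python
from collections import OrderedDict


def lru_page_interrupts(requests, num_frames):
    """Count page interrupts under least-recently-used replacement."""
    frames = OrderedDict()  # least recently used page is first
    interrupts = 0
    for page in requests:
        if page in frames:
            frames.move_to_end(page)  # mark as most recently used
            continue
        interrupts += 1
        if len(frames) == num_frames:
            frames.popitem(last=False)  # evict the least recently used page
        frames[page] = True
    return interrupts


requests = list("ABACABDBACD")  # assumed request sequence
print([lru_page_interrupts(requests, n) for n in (2, 3, 4)])  # [8, 6, 4]
```

The counts never increase as frames are added, which is the stack property described above.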

41

Use a Stack to Record the Most Recent Page Reference

42

Mechanics of Paging: Page Map Table

- Status bit indicates if the page is currently in memory or not.

- Referenced bit indicates if the page has been referenced recently.

  - Used by LRU to determine which pages should be swapped out.

- Modified bit indicates if the page contents have been altered.

  - Used to determine if the page must be rewritten to secondary storage when it's swapped out.
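
One plausible way to combine the bits when choosing a page to swap out is sketched below. The ranking is an assumption, not something the slides specify: it prefers pages that were not referenced recently, and among those, pages that were not modified (so no write to secondary storage is needed). Other orderings are possible. The function name and table layout are hypothetical.

```python
def choose_victim(pmt):
    """Pick a resident page, preferring unreferenced then unmodified pages."""
    resident = [(page, e) for page, e in pmt.items() if e["status"]]
    # False sorts before True, so (False, False) is the cheapest choice.
    page, _ = min(resident, key=lambda item: (item[1]["referenced"],
                                              item[1]["modified"]))
    return page


# Hypothetical Page Map Table with the three bits per page.
pmt = {
    0: {"status": True, "referenced": True, "modified": True},
    1: {"status": True, "referenced": False, "modified": True},
    2: {"status": True, "referenced": True, "modified": False},
    3: {"status": False, "referenced": False, "modified": False},
}
print(choose_victim(pmt))  # 1
```

Page 3 is skipped because its status bit says it is not in memory.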

43

Four Possible Combinations of Modified and Referenced Bits

44

Global vs. Local Replacement

- Global replacement

  - Select a replacement frame from the set of all frames.

  - One process can take frames from others.

- Local replacement

  - Each process selects from only its own set of allocated frames.

45

Page Replacement: The Working Set

- Working set: the set of pages residing in memory that can be accessed directly without incurring a page fault.

  - Improves performance of demand paging schemes.

- Locality of reference occurs with well-structured programs.

  - During any phase of its execution, a program references only a small fraction of its pages.
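
The slides do not say how a working set is measured. One common approach, assumed here, is a sliding window: the working set at time t is the set of distinct pages referenced in the last few references. The function name, the window size, and the reference sequence are all made up for illustration.

```python
def working_set(references, t, window):
    """Distinct pages referenced in the `window` references ending at index t."""
    start = max(0, t - window + 1)
    return set(references[start:t + 1])


# Hypothetical reference sequence showing two phases of locality.
refs = ["A", "B", "A", "C", "C", "C", "D", "C"]
print(sorted(working_set(refs, 3, 4)))  # ['A', 'B', 'C']
print(sorted(working_set(refs, 7, 4)))  # ['C', 'D']
```

As the program moves from one phase to the next, the set shrinks from three pages to two, which is locality of reference in miniature.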

46

Locality in a memory-reference pattern.

47

Page Replacement: The Working Set

- The system must decide:

  - How many pages comprise the working set?

  - What's the maximum number of pages the operating system will allow for a working set?

49

Pros and Cons of Demand Paging

- First scheme in which a job was no longer constrained by the size of physical memory (virtual memory).

- Uses memory more efficiently than previous schemes because sections of a job that are used seldom or not at all aren't loaded into memory unless specifically requested.

- Increased overhead caused by tables and page interrupts.

50

Segmented Memory Allocation

- Based on the common practice by programmers of structuring their programs in modules (logical groupings of code).

- A segment is a logical unit such as a main program, subroutine, procedure, function, local variables, global variables, common block, stack, symbol table, or array.

51

User's View of a Program

52

Segmented Memory Allocation

- Main memory is not divided into page frames because the size of each segment is different.

- Memory is allocated dynamically.

53

Logical View of Segments

54

Segment Map Table (SMT)

- When a program is compiled, segments are set up according to the program's structural modules.

- Logical address format: (Segment Number, Displacement).

- Each segment is numbered and a Segment Map Table (SMT) is generated for each job.

  - Contains segment numbers, their lengths, access rights, status, and (when each is loaded into memory) its location in memory.
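
Translating a (Segment Number, Displacement) address with an SMT might look like the sketch below. The table contents, field names, and function name are hypothetical. Because segments differ in length, the displacement is checked against the segment's own length rather than a fixed page size.

```python
# Hypothetical Segment Map Table for one job: segment number -> details.
SMT = {
    0: {"length": 350, "base": 4000},
    1: {"length": 200, "base": 7000},
}


def resolve_segment_address(segment, displacement, smt):
    """Translate (segment number, displacement) into a physical address."""
    entry = smt.get(segment)
    if entry is None:
        raise IndexError(f"no such segment: {segment}")
    if not 0 <= displacement < entry["length"]:
        raise IndexError("displacement is outside the segment")
    return entry["base"] + displacement


print(resolve_segment_address(1, 76, SMT))  # 7076
```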

55

Tables Used in Segmentation

- The Memory Manager needs to track segments in memory:

  - Job Table (JT) lists every job in process (one for whole system).

  - Segment Map Table lists details about each segment (one for each job).

  - Memory Map Table monitors allocation of main memory (one for whole system).

56

Segmentation Hardware

58

Pros and Cons of Segmentation

- Compaction.

- External fragmentation.

- Secondary storage handling.

- Memory is allocated dynamically.

59

Segmented/Demand Paged Memory Allocation

- Evolved from a combination of segmentation and demand paging.

  - Logical benefits of segmentation.

  - Physical benefits of paging.

- Subdivides each segment into pages of equal size, smaller than most segments, and more easily manipulated than whole segments.

- Eliminates many problems of segmentation because it uses fixed-length pages.

60

4 Tables Are Used in Segmented/Demand Paging

- Job Table lists every job in process (one for whole system).

- Segment Map Table lists details about each segment (one for each job).

  - E.g., protection data, access data.

- Page Map Table lists details about every page (one for each segment).

  - E.g., status, modified, and referenced bits.

- Memory Map Table monitors allocation of page frames in main memory (one for whole system).
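
With one Page Map Table per segment, a lookup goes through two tables before reaching memory. The sketch below assumes a three-part logical address (segment, page, displacement) and a 512-byte page; the table contents and the function name are hypothetical.

```python
PAGE_SIZE = 512  # assumed page size in bytes

# Hypothetical Segment Map Table: segment number -> that segment's PMT
# (page number -> page frame number).
SMT = {
    0: {0: 7, 1: 2},
    1: {0: 4},
}


def resolve(segment, page, displacement, smt, page_size=PAGE_SIZE):
    """Translate (segment, page, displacement) into a physical address."""
    if not 0 <= displacement < page_size:
        raise IndexError("displacement is outside the page")
    pmt = smt[segment]  # first table reference: Segment Map Table
    frame = pmt[page]   # second table reference: Page Map Table
    return frame * page_size + displacement


print(resolve(0, 1, 6, SMT))  # 1030
```

The two dictionary lookups correspond to the extra table references that add overhead to this scheme.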

61

MULTICS Address Translation Scheme

62

Intel 80386 Address Translation

63

Pros and Cons of Segmented/Demand Paging

- Overhead required for the extra tables.

- Time required to reference the segment table and page table.

- Logical benefits of segmentation.

- Physical benefits of paging.

- To minimize the number of references, many systems use associative memory to speed up the process.

64

Associative Memory

- Associative memory: parallel search

- Translation Look-aside Buffer (TLB)
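
A TLB can be modeled as a small cache in front of the page map table. This sketch is an approximation under stated assumptions: the class, its capacity, and the least-recently-used eviction rule are all hypothetical, and a Python dictionary lookup only imitates the parallel search that associative memory performs in hardware.

```python
from collections import OrderedDict


class TLB:
    """Small cache of recent page -> frame translations."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def lookup(self, page, pmt):
        if page in self.entries:
            self.hits += 1
            self.entries.move_to_end(page)
            return self.entries[page]
        self.misses += 1
        frame = pmt[page]  # slow path: reference the page map table
        if len(self.entries) == self.capacity:
            self.entries.popitem(last=False)  # drop the least recently used entry
        self.entries[page] = frame
        return frame


pmt = {0: 5, 1: 3, 2: 8}  # hypothetical page map table
tlb = TLB(capacity=2)
frames = [tlb.lookup(page, pmt) for page in [1, 1, 0, 1, 2]]
print(frames, tlb.hits, tlb.misses)  # [3, 3, 5, 3, 8] 2 3
```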

65

Paging Hardware with TLB

66

Virtual Memory (VM)

- Even though only a portion of each program is stored in memory, virtual memory gives the appearance that programs are being completely loaded in main memory during their entire processing time.

- Shared programs and subroutines are loaded on demand, reducing storage requirements of main memory.

- VM is implemented through demand paging and segmentation schemes.

67

Comparison of VM With Paging and With Segmentation

68

Advantages of VM

- Works well in a multiprogramming environment because most programs spend a lot of time waiting.

- A job's size is no longer restricted to the size of main memory (or the free space within main memory).

- Memory is used more efficiently.

- Allows an unlimited amount of multiprogramming.

69

Advantages of VM (cont'd)

- Eliminates external fragmentation when used with paging and eliminates internal fragmentation when used with segmentation.

- Allows a program to be loaded multiple times, occupying a different memory location each time.

- Allows the sharing of code and data.

- Facilitates dynamic linking of program segments.

70

Disadvantages of VM

- Increased processor hardware costs.

- Increased overhead for handling paging interrupts.

- Increased software complexity to prevent thrashing.

71

Key Terms

- address resolution

- associative memory

- demand paging

- displacement

- FIFO anomaly

- first-in first-out (FIFO) policy

- Job Table (JT)

- least-recently-used (LRU) policy

- locality of reference

- Memory Map Table (MMT)

- page

- page fault

- page fault handler

- page frame

- Page Map Table (PMT)

- page replacement policy

- page swap

- paged memory allocation

- reentrant code

- segment

- Segment Map Table (SMT)

72

Key Terms - 2

- segmented memory allocation

- segmented/demand paged memory allocation

- thrashing

- virtual memory

- working set