Conceptual Clustering - PowerPoint PPT Presentation

1

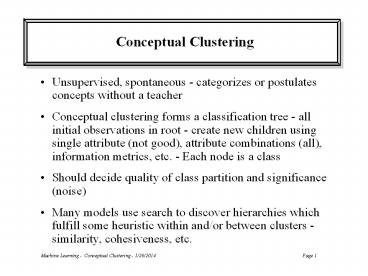

Conceptual Clustering

- Unsupervised, spontaneous: categorizes or postulates concepts without a teacher
- Conceptual clustering forms a classification tree
  - All initial observations start in the root
  - Create new children using a single attribute (not good), attribute combinations (all), information metrics, etc.
- Each node is a class
- Should decide the quality of a class partition and its significance (noise)
- Many models use search to discover hierarchies which fulfill some heuristic within and/or between clusters: similarity, cohesiveness, etc.

2

Cobweb

- Cobweb is an incremental hill-climbing strategy with bidirectional operators: it does not backtrack, but could in theory return to an earlier state
- Starts empty. Creates a full concept hierarchy (classification tree) with each leaf representing a single instance/object. You can choose how deep in the tree hierarchy to go for the specific application at hand
- Objects are described as nominal attribute-value pairs
- Each created node is a probabilistic concept (a class) which stores its probability of being matched (count/total) and, for each attribute value, the probability of it being on, $P(a = v \mid C)$. Only counts need to be stored.
- Arcs in the tree are just connections: nodes store info across all attributes (unlike ID3, etc.)
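To make the "only counts need to be stored" point concrete, here is a minimal sketch of a probabilistic concept node. The class name `ConceptNode` and its methods are hypothetical, chosen for illustration; the slides do not prescribe a data structure.

```python
from collections import Counter, defaultdict


class ConceptNode:
    """Hypothetical probabilistic concept: stores counts, derives probabilities."""

    def __init__(self):
        self.count = 0                          # instances matched to this node
        self.av_counts = defaultdict(Counter)   # attribute -> value -> count
        self.children = []                      # arcs are plain connections

    def add(self, instance):
        """Fold one instance (dict of attribute -> nominal value) into the counts."""
        self.count += 1
        for attr, value in instance.items():
            self.av_counts[attr][value] += 1

    def p_value(self, attr, value):
        """P(attr = value | this concept), computed from counts on demand."""
        if self.count == 0:
            return 0.0
        return self.av_counts[attr][value] / self.count

    def p_match(self, total):
        """Probability of being matched: count / total instances at the parent."""
        return self.count / total if total else 0.0
```

Because every probability is a ratio of counts, adding an instance is a handful of integer increments, which suits the incremental setting.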

3

Category Utility Heuristic Measure

- Tradeoff between intra-class similarity and inter-class dissimilarity: sums measures from each individual attribute
- Intra-class similarity is a function of $P(A_i = V_{ij} \mid C_k)$, the predictability of V given C. A larger P means that if the class is C, A is likely to be V. Objects within a class should have similar attributes.
- Inter-class dissimilarity is a function of $P(C_k \mid A_i = V_{ij})$, the predictiveness of V for C. A larger P means A = V suggests the instance is a member of class C rather than some other class. A is a stronger predictor of class C.

4

Category Utility Intuition

- Both should be high over all (most) attributes for a good class breakdown
- Predictability $P(V \mid C)$ could be high for multiple classes, giving a relatively low $P(C \mid V)$, and thus is not good for discrimination
- Predictiveness $P(C \mid V)$ could be high for a class while $P(V \mid C)$ is relatively low, because V occurs rarely; this is good for discrimination, but not for intra-class similarity
- When both are high, we get the best categorization balance between discrimination and intra-class similarity
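A small worked example may help separate the two quantities. The toy data below is invented for illustration (it is not from the slides), and the function names are hypothetical.

```python
from collections import Counter

# Hypothetical toy data: (class label, value of a single attribute "color").
observations = [
    ("bird", "brown"), ("bird", "brown"), ("bird", "brown"), ("bird", "red"),
    ("fish", "brown"), ("fish", "brown"), ("fish", "brown"), ("fish", "silver"),
]

class_counts = Counter(c for c, _ in observations)
value_counts = Counter(v for _, v in observations)
pair_counts = Counter(observations)


def predictability(value, cls):
    """P(V | C): how common the value is inside the class."""
    return pair_counts[(cls, value)] / class_counts[cls]


def predictiveness(cls, value):
    """P(C | V): how strongly the value points at the class."""
    return pair_counts[(cls, value)] / value_counts[value]
```

In this data, "brown" is predictable for birds (0.75) but only weakly predictive (0.5) because fish are brown too, while "red" is perfectly predictive of birds (1.0) but not very predictable (0.25) because it occurs rarely.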

5

Category Utility

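- For each category, sum predictability times predictiveness for each attribute, weighted by $P(A_i = V_{ij})$, with k proposed categories, i attributes, and j values per attribute: $\sum_k \sum_i \sum_j P(A_i = V_{ij})\, P(C_k \mid A_i = V_{ij})\, P(A_i = V_{ij} \mid C_k)$
- This is the expected number of attribute values one could guess given C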
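The link between the weighted sum and the "expected number of guessable attribute values" reading follows from Bayes' rule, $P(A_i = V_{ij})\, P(C_k \mid A_i = V_{ij}) = P(C_k)\, P(A_i = V_{ij} \mid C_k)$. The derivation below is a sketch; the interpretation assumes a guesser that picks value $V_{ij}$ with probability $P(A_i = V_{ij} \mid C_k)$ (probability matching), which the slide does not state explicitly.

```latex
\begin{align*}
\sum_k \sum_i \sum_j P(A_i = V_{ij})\, P(C_k \mid A_i = V_{ij})\, P(A_i = V_{ij} \mid C_k)
  &= \sum_k \sum_i \sum_j P(C_k)\, P(A_i = V_{ij} \mid C_k)^2 \\
  &= \sum_k P(C_k) \sum_i \sum_j P(A_i = V_{ij} \mid C_k)^2
\end{align*}
```

Under that assumption, the inner double sum is the expected number of attribute values guessed correctly for a member of $C_k$, and the outer sum averages it over categories.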

6

Category Utility

- Category Utility is the increase in the expected number of attribute values that could be guessed, given a partitioning of categories (leaf nodes)
- $CU(C_1, C_2, \ldots, C_K) = \frac{1}{K} \sum_{k=1}^{K} P(C_k) \sum_i \sum_j \left[ P(A_i = V_{ij} \mid C_k)^2 - P(A_i = V_{ij})^2 \right]$
- K normalizes CU for different numbers of categories in the candidate partition
- Since Cobweb is incremental, there is a limited number of possible categorization partitions
- If $A_i = V_{ij}$ is independent of (irrelevant to) class membership, its contribution to CU is 0
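As a minimal sketch, category utility can be computed directly from a candidate partition. The code assumes the standard form (within-class expected correct guesses minus the baseline, weighted by class probability and divided by K), that every instance is a dict of nominal attribute values with no missing attributes, and that no cluster is empty. The function names are hypothetical.

```python
from collections import Counter


def expected_correct(instances):
    """Sum over attributes and values of P(A = V)^2 within `instances`."""
    n = len(instances)
    counts = Counter((a, v) for inst in instances for a, v in inst.items())
    return sum((c / n) ** 2 for c in counts.values())


def category_utility(partition):
    """CU of a partition given as a list of non-empty clusters (lists of dicts)."""
    everything = [inst for cluster in partition for inst in cluster]
    n = len(everything)
    baseline = expected_correct(everything)   # guesses possible with no classes
    gain = sum(
        len(cluster) / n * (expected_correct(cluster) - baseline)
        for cluster in partition
    )
    return gain / len(partition)              # K normalizes across partition sizes
```

A partition that separates red from blue objects scores above zero, while a partition whose classes each mirror the overall color distribution scores exactly zero, matching the independence remark above.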

7

Cobweb Learning Algorithm

- 1. Incrementally add a new training example
- 2. Recurse down the tree, starting at the root, until a new node with just this example is added. Update the appropriate probabilities at each level.
- 3. At each level of the tree, calculate the scores for all valid modifications using category utility (CU)
- 4. Proceed depending on which of the following gives the best score:
  - Classify into an existing class: then recurse
  - Create a new class node: done, can get next example
  - Combine two classes into a single class (Merging): then recurse
  - Divide a class into multiple classes (Splitting): then recurse

8

Cobweb Learning Mechanisms

- Classifying (Matching): calculate overall CU for each case of putting the example in a node at the current level
- New Class: calculate overall CU for putting the example into a single new class
  - Note the gradient descent (greedy) nature: it does not go back and try all possible new partitions
  - If created from an internal node, simply add
  - If created from a leaf node, split into two, one for the new example and one for the old
- These alone are quite order dependent: splitting and merging allow bi-directionality (the ability to undo)
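The two operators above can be sketched for a single level of the tree. This is a simplified illustration, not Cobweb itself: it omits recursion, merging, and splitting, represents each class as a plain list of instances, and assumes the standard category utility formula. All function names are hypothetical.

```python
from collections import Counter


def _sq(instances):
    """Sum over attributes and values of P(A = V)^2 within `instances`."""
    n = len(instances)
    counts = Counter((a, v) for inst in instances for a, v in inst.items())
    return sum((c / n) ** 2 for c in counts.values())


def category_utility(partition):
    """CU of a list of non-empty clusters (each a list of dict instances)."""
    everything = [inst for cluster in partition for inst in cluster]
    base = _sq(everything)
    gain = sum(len(c) / len(everything) * (_sq(c) - base) for c in partition)
    return gain / len(partition)


def best_placement(partition, instance):
    """Score 'classify into class i' for every i plus 'new class'; keep the best."""
    candidates = []
    for idx in range(len(partition)):
        trial = [c + [instance] if i == idx else c for i, c in enumerate(partition)]
        candidates.append((category_utility(trial), trial, f"existing class {idx}"))
    trial = partition + [[instance]]
    candidates.append((category_utility(trial), trial, "new class"))
    # Greedy choice: only these single-step alternatives are compared.
    return max(candidates, key=lambda t: t[0])
```

With two classes of red-round and blue-square objects, another red-round instance is placed in the first class, whereas a green-triangle instance scores best as a new class.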

9

Cobweb Learning Mechanisms

- Merging: for the best matching node (the one that would be chosen for classification) and the second best matching node at that level, calculate CU when both are merged into one node with two children
- Splitting: for the best matching node, calculate CU if that node were deleted and its children added to the current level
- Both schemes could be extended to test other nodes, at the cost of increased computational complexity
- Can overcome initial misconceptions
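The candidate partitions produced by merging and splitting can be sketched as follows. Nodes are represented here as plain dicts with `"instances"` and `"children"` keys, which is an assumption made for brevity; scoring each candidate with CU is left to the caller.

```python
def merge_candidate(level, best, second):
    """Level in which nodes `best` and `second` become children of one merged node."""
    merged = {
        "instances": level[best]["instances"] + level[second]["instances"],
        "children": [level[best], level[second]],
    }
    rest = [node for i, node in enumerate(level) if i not in (best, second)]
    return rest + [merged]


def split_candidate(level, best):
    """Level in which node `best` is deleted and its children are promoted."""
    rest = [node for i, node in enumerate(level) if i != best]
    return rest + level[best]["children"]
```

Splitting a node that was just created by a merge restores the original set of nodes at that level (in a different order here), which is the sense in which the two operators undo each other.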

10

Cobweb Comments

- Generalization is done by just executing the recursive classification step
- Could use different variations on CU and search strategy
- Complexity is $O(A V B^2 \log K)$ for each example, where B is the branching factor, A the number of attributes, V the average number of values per attribute, and K the number of classes
- Empirically, B is usually between 2 and 5
- Does not directly handle noise: no defined significance mechanism
- Tends to make bushy trees; however, high levels should be the most important class categories (because merge/split cause the best breaks to float up, though with no guarantee of optimality), and one could just use the nodes highest in the tree for classification
- Does not support continuous values

11

Extensions - Classit

- Cobweb cannot store probability counts for continuous data
- Classit uses a scheme similar to Cobweb, but assumes a normal distribution around an attribute and thus can just store a mean and variance (not always a reasonable assumption)
- Also uses a formal cut-off (significance) mechanism to better support generalization and noise handling (a class node can then include outliers)
- More work needed
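Storing a mean and variance per attribute fits the incremental setting if both can be updated one value at a time. The sketch below uses Welford's online algorithm for that update; this is an assumption for illustration, since the slides do not say how Classit maintains these statistics. The class name `RunningNormal` is hypothetical.

```python
class RunningNormal:
    """Hypothetical per-attribute summary for a continuous attribute."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0   # running sum of squared deviations from the mean

    def add(self, x):
        """Fold one observed value into the summary (Welford's update)."""
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (x - self.mean)

    @property
    def variance(self):
        """Population variance; 0.0 before any value has been seen."""
        return self.m2 / self.n if self.n else 0.0
```

Only three numbers are kept per attribute per node, which plays the role that value counts play for nominal attributes in Cobweb.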
